Over the past few years, Webtide has been working closely with Google to improve the usage of Jetty in the App Engine Java Standard Runtime. We have updated the GAE Java21 Runtime to use Jetty 12 with support for both EE8 and EE10 environments. In addition, a new HttpConnector mode has been added to increase the performance of all Java Runtimes; it is expected to yield significant cost savings through lower memory and CPU usage.

Bypassing RPC Layer with HttpConnector Mode

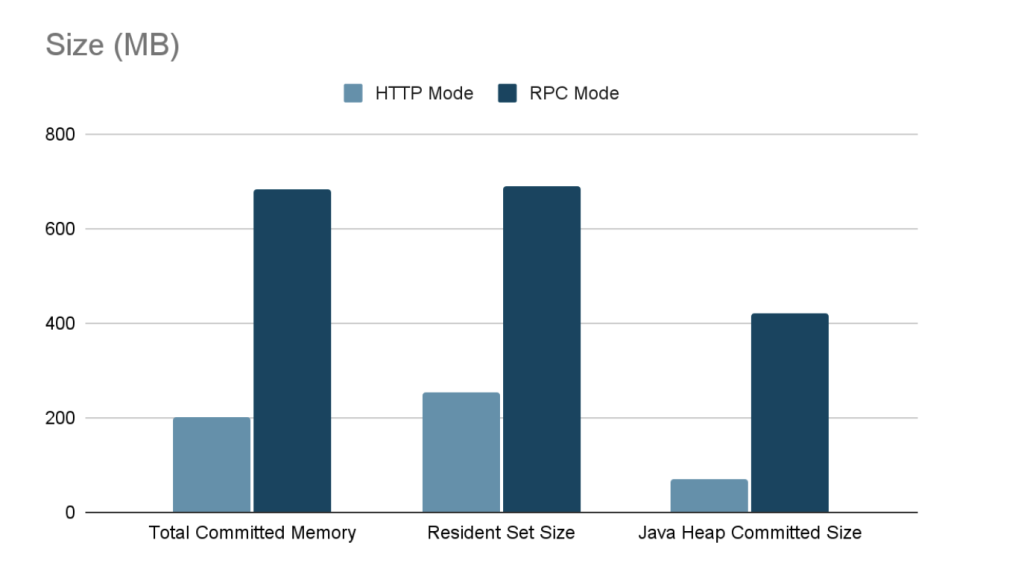

Recently, we implemented a new mode for the Java Runtimes that bypasses the legacy gRPC layer previously needed to support the GEN1 runtimes. This legacy code path allowed the GEN1 and GEN2 Runtimes to be supported simultaneously, but it carried significant overhead: it used two separate Jetty Servers, one to parse HTTP requests and convert them to RPC, and another with a custom Jetty Connector that allowed the RPC requests to be processed by Jetty. It also required the full request and response content to be buffered, which further increased memory usage.

The new HttpConnector mode bypasses this RPC layer entirely, so full request and response contents no longer need to be buffered. It also removes the need to start a second Jetty Server, further reducing overhead and streamlining request handling.

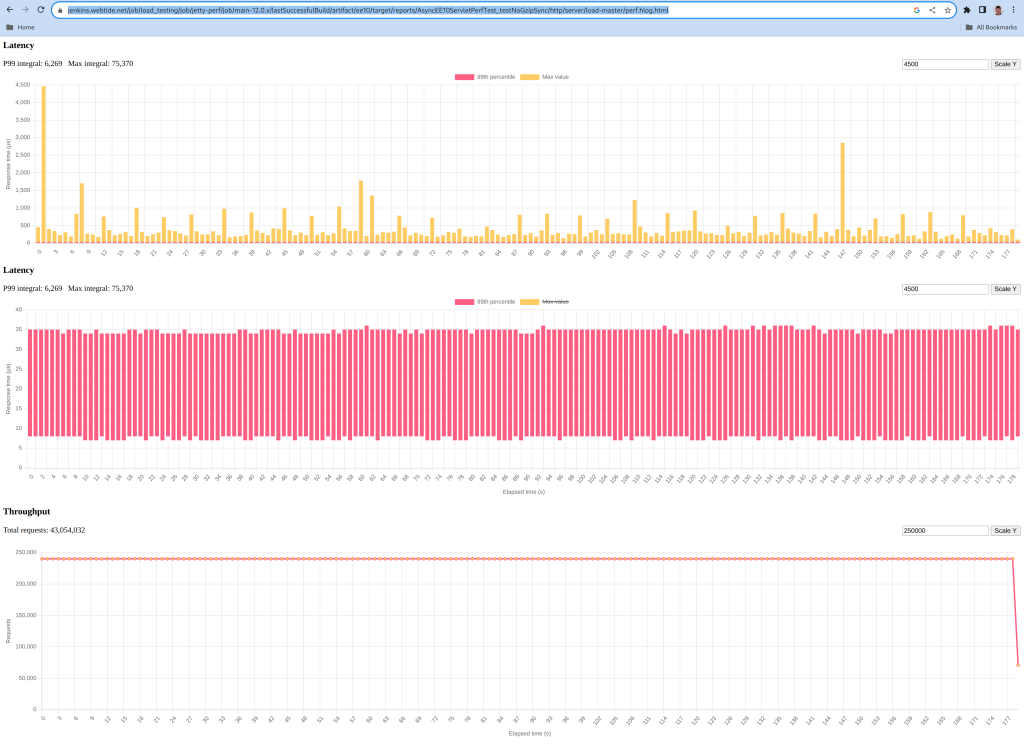

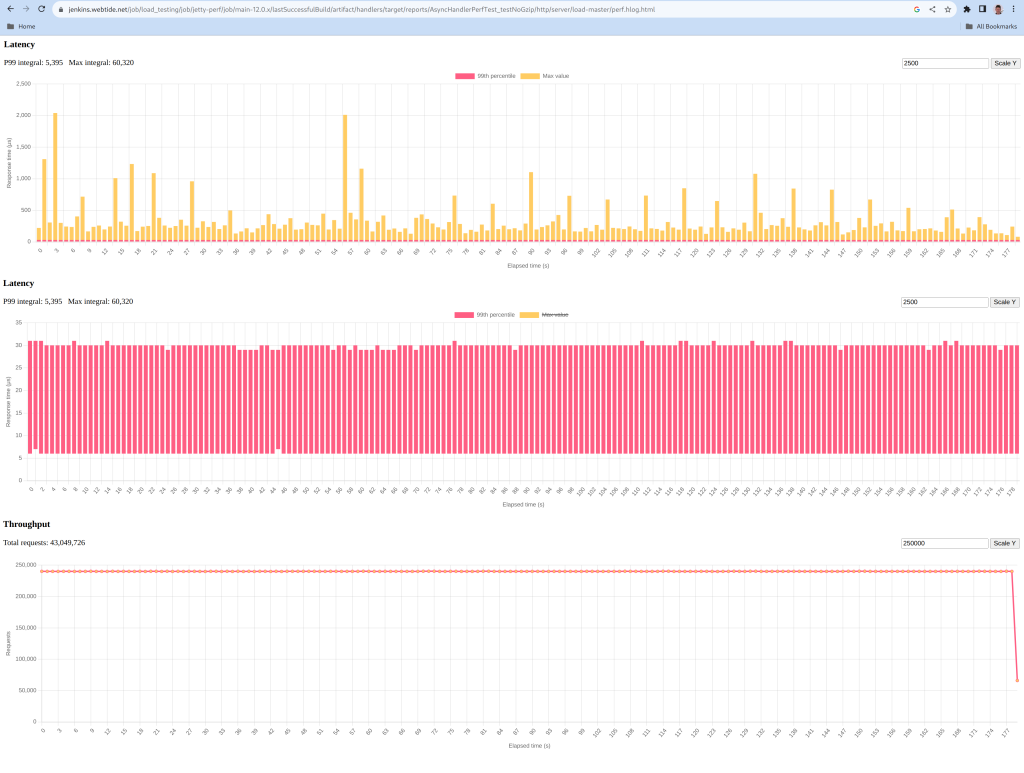

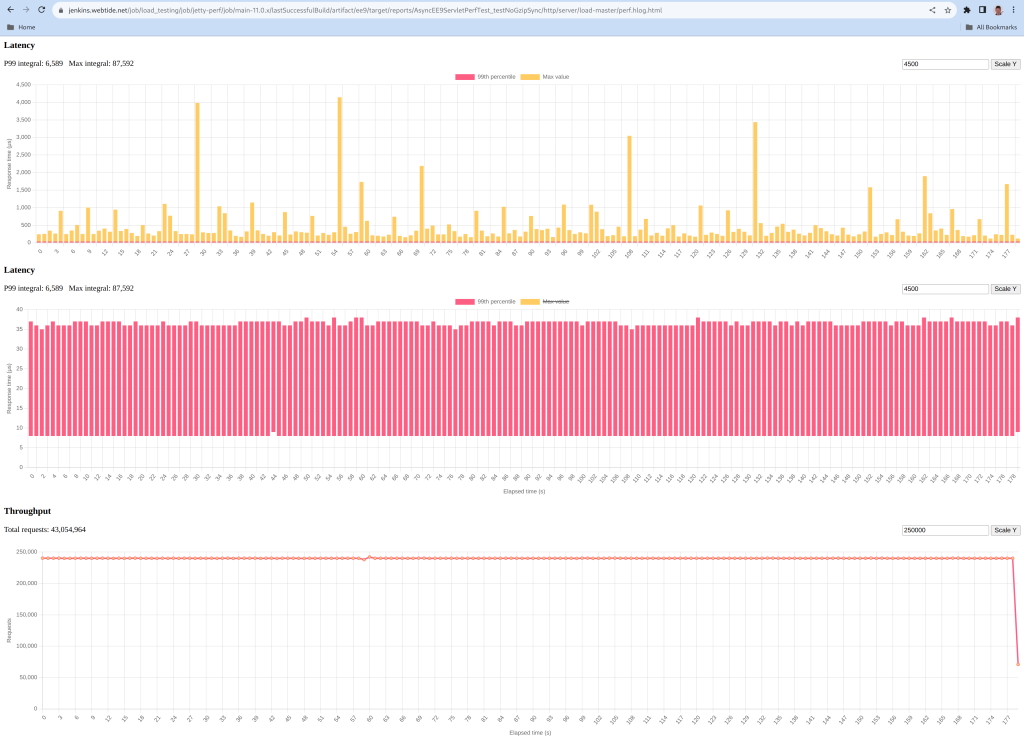

Benchmarks

Benchmarks conducted on the new HttpConnector mode have demonstrated significant performance improvements. Detailed results and documentation of these benchmarks can be found here.

Usage

To take advantage of the new HttpConnector mode, developers can set the appengine.use.HttpConnector system property to true in their appengine-web.xml file:

    <system-properties>
      <property name="appengine.use.HttpConnector" value="true"/>
    </system-properties>

With this configuration, applications get the improved performance and efficiency of the new HttpConnector mode. It is available for all Java Runtimes from Java8 to Java21.
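
For context, here is a minimal sketch of where this fragment might sit in a complete appengine-web.xml. The surrounding elements (the `appengine-web-app` root with its namespace and the `java21` runtime value) are assumptions for illustration and are not taken from this post; only the `system-properties` entry comes from the text above, using the property name `appengine.use.HttpConnector` as it is written there.

```xml
<?xml version="1.0" encoding="utf-8"?>
<!-- Hypothetical minimal descriptor; adapt the runtime to your application. -->
<appengine-web-app xmlns="http://appengine.google.com/ns/1.0">
  <!-- Assumed runtime; the post says the mode applies from Java8 to Java21. -->
  <runtime>java21</runtime>

  <system-properties>
    <!-- Opt in to the HttpConnector mode described in this post. -->
    <property name="appengine.use.HttpConnector" value="true"/>
  </system-properties>
</appengine-web-app>
```

Because the mode is opt-in, removing the property (or setting its value to false) should leave the application on the existing RPC-based path.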

This mode is currently opt-in, but the plan is to make it the default for all applications.