The websocket protocol has been touted as a great leap forward for bidirectional web applications like chat, promising a new era of simple comet applications. Unfortunately there is no such thing as a silver bullet and this blog will walk through a simple chat room to see where websocket does and does not help with comet applications. In a websocket world, there is even more need for frameworks like cometd.

Simple Chat

A chat is the “helloworld” application of web-2.0 and a simple websocket chat room is included with the jetty-7 which now supports websockets. The source of the simple chat can be seen in svn for the client side and server side. The key part of the client side is to establish a WebSocket connection:

join: function(name) { this._username=name; var location = document.location.toString().replace('http:','ws:'); this._ws=new WebSocket(location); this._ws.onopen=this._onopen; this._ws.onmessage=this._onmessage; this._ws.onclose=this._onclose; },

It is then possible for the client to send a chat message to the server:

_send: function(user,message){ user=user.replace(':','_'); if (this._ws) this._ws.send(user+':'+message); },

and to receive a chat message from the server and to display it:

_onmessage: function(m) { if (m.data){ var c=m.data.indexOf(':'); var from=m.data.substring(0,c).replace('<','<').replace('>','>'); var text=m.data.substring(c+1).replace('<','<').replace('>','>');

var chat=$('chat'); var spanFrom = document.createElement('span'); spanFrom.className='from'; spanFrom.innerHTML=from+': '; var spanText = document.createElement('span'); spanText.className='text'; spanText.innerHTML=text; var lineBreak = document.createElement('br'); chat.appendChild(spanFrom); chat.appendChild(spanText); chat.appendChild(lineBreak); chat.scrollTop = chat.scrollHeight - chat.clientHeight; } },

For the server side, we simply accept incoming connections as members:

public void onConnect(Outbound outbound) { _outbound=outbound; _members.add(this); }

and then for all messages received, we send them to all members:

public void onMessage(byte frame, String data) { for (ChatWebSocket member : _members) { try { member._outbound.sendMessage(frame,data); } catch(IOException e) { Log.warn(e); } } }

So we are done right? We have a working chat room – let’s deploy it and we’ll be the next google gchat!! Unfortunately reality is not that simple and this chat room is a long way short of the kinds of functionality that expect from a chat room – even a simple one.

Not So Simple Chat

On Close?

With a chat room, the standard use-case is that once you establish your presence in the room and it remains until you explicitly leave the room. In the context of webchat, that means that you can send receive a chat message until you close the browser or navigate away from the page. Unfortunately the simple chat example does not implement this semantic because the websocket protocol allows for an idle timeout of the connection. So if nothing is said in the chat room for a short while then the websocket connection will be closed, either by the client, the server or even and intermediary. The application will be notified of this event by the onClose method being called.

So how should the chat room handle onClose? The obvious thing to do is for the client to simply call join again and open a new connection back to the server:

_onclose: function() { this._ws=null; this.join(this.username); }

This indeed maintains the user’s presence in the chat room, but is far from an ideal solution since every few idle minutes the user will leave the room and rejoin. For the short period between connections, they will miss any messages sent and will not be able to send any chat themselves.

Keep Alives

In order to maintain presence, the chat application can send keep-alive messages on the websocket to prevent it being closed due to an idle timeout. However, the application has no idea at all about what the idle timeout are, so it will have to pick some arbitrary frequent period (eg 30s) to send keep-alives and hope that is less than any idle timeout on the path (more or less as long-polling does now). Ideally a future version of websocket will support timeout discovery, so it can either tell the application the period for keep-alive messages or it could even send the keep alives on behalf of the application.

Unfortunately keep-alives don’t avoid the need for onClose to initiate new Websockets, because the internet is not a perfect place and specially with wifi and mobile clients, sometimes connections just drop. It is a standard part of HTTP that if a connection closes while being used, the GET requests are retried on new connections, so users are mostly insulated from transient connection failures. A websocket chat room needs to work with the same assumption and even with keep-alives, it needs to be prepared to reopen a connection when onClose is called.

Queues

With keep alives, the websocket chat connection should be mostly be a long lived entity, with only the occasional reconnect due to transient network problems or server restarts. Occasional loss of presence might not been seen to be a problem, unless you’re the dude that just typed a long chat message on the tiny keyboard of your vodafone360 app or instead of chat you are playing on chess.com and you don’t want to abandon a game due to transient network issues. So for any reasonable level of quality of service, the application is going to need to “pave over” any small gaps in connectivity by providing some kind of message queue in both client and server. If a message is sent during the period of time that there is no websocket connection, it needs to be queued until such time as the new connection is established.

Timeouts

Unfortunately, some failures are not transient and

sometimes a new connection will not be established. We can’t allow queues to grow for ever and to pretend that a user is present long after their connection is gone. Thus both ends of the

chat application will also need timeouts and user will not be seen to

have left the chat room until they have no connection for the period

of the timeout or until an explicit leaving message is received.

Ideally

a future version of websocket will support an orderly close message so

the application can distinguish between a network failure (and keep the

user’s presence for a time) and an orderly close as the user leaves the page (and

remove the user’s present).

Message Retries

Even with message queues, there is a race condition that makes it difficult to completely close the gaps between connections. If the onClose method is called very soon after a message is sent, then the application has no way to know if that close happened before or after the message was delivered. If quality of service is important, then the application currently has not option but to have some kind of per message or periodic acknowledgement of message delivery. Ideally a future version of websocket will support orderly close, so that delivery can be known for non failed connections and a complication of acknowledgements can be avoided unless the highest quality of service is required.

Backoff

With onClose handling, keep-alives, message queues, timeouts and retries, we finally will have a chat room that can maintain a users presence while they remain on the web page. But unfortunately the chat room is still not complete, because it needs to handle errors and non transient failures. Some of the circumstances that need to be avoided include:

- If the chat server is shut down, the client application is notified of this simply by a call to onClose rather than an onOpen call. In this case, onClose should not just reopen the connection as a 100% CPU busy loop with result. Instead the chat application has to infer that there was a connection problem and to at least pause a short while before trying again – potentially with a retry backoff algorithm to reduce retries over time. Ideally a future version of websocket will allow more access to connection errors, as the handling of no-route-to-host may be entirely different to handling of a 401 unauthorized response from the server.

- If the user types a large chat message, then the websocket frame sent may exceed some resource level on the client, server or intermediary. Currently the websocket response to such resource issues is to simply close the connection. Unfortunately for the chat application, this may look like a transient network failure (coming after a successful onOpen call), so it may just reopen the connection and naively retry sending the message, which will again exceed the max message size and we can lather, rinse and repeat! Again it is important that any automatic retries performed by the application will be limited by a backoff timeout and/or max retries. Ideally a future version of websocket will be able to send an error status as something distinct from a network failure or idle timeout, so the application will know not to retry errors.

Does it have to be so hard?

The above scenario is not the only way that a robust chat room could be developed. With some compromises on quality of service and some good user interface design, it would certainly be possible to build a chat room with less complex usage of a WebSocket. However, the design decisions represented by the above scenario are not unreasonable even for chat and certainly are applicable to applications needing a better QoS that most chat rooms.

What this blog illustrates is that there is no silver bullet and that WebSocket will not solve many of the complexities that need to be addressed when developing robust comet web applications. Hopefully some features such as keep alives, timeout negotiation, orderly close and error notification can be build into a future version of websocket, but it is not the role of websocket to provide the more advanced handling of queues, timeouts, reconnections, retries and backoffs. If you wish to have a high quality of service, then either your application or the framework that it uses will need to deal with these features.

Cometd with Websocket

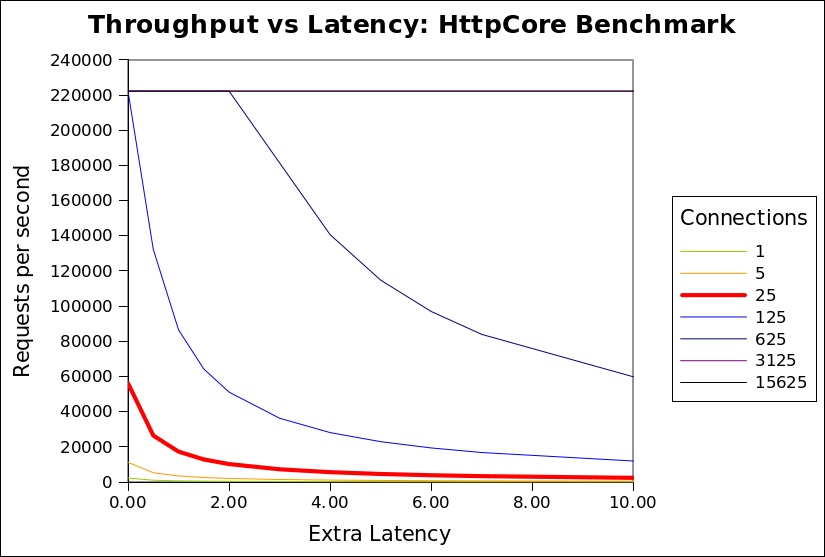

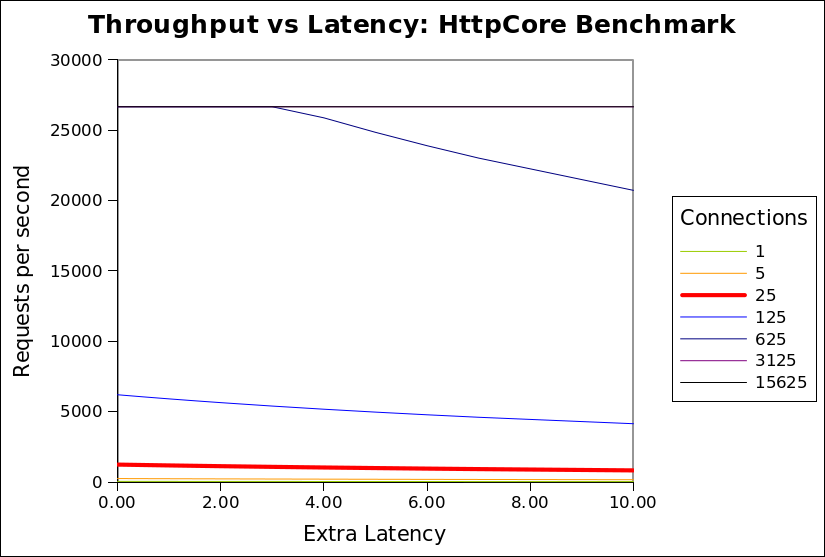

Cometd version 2 will soon be released with support for websocket as an alternative transport to the currently supported JSON long polling and JSONP callback polling. Cometd supports all the features discussed in this blog and makes them available transparently to browsers with or without websocket support. We are hopeful that websocket usage will be able to give us even better throughput and latency for cometd than the already impressive results achieved with long polling.