Now that the HTTP/2 specification is in its final phases of approval, big players have announced that they will remove support for SPDY in favor of long-term support for HTTP/2 (Chromium blog). We expect others to follow soon.

Based on this trend and on feedback from users, the Jetty Project is announcing that it will drop support for SPDY in Jetty 9.3.x, replacing its functionality with HTTP/2. Milestone builds of Jetty 9.3.0 are available now through Maven Central if you would like to try them out. A new milestone will be released shortly, followed by a full release once the specification is finalized.

The SPDY protocol will remain supported in the Jetty 9.2.x series, but no further work will be done on it unless it is sponsored by a client. This will allow us to concentrate fully on a first class quality implementation of HTTP/2.

Along these same lines, Jetty 9.3 will drop support for NPN (the TLS Next Protocol Negotiation Extension), replacing its functionality with ALPN (the TLS Application Layer Protocol Negotiation Extension, RFC 7301). NPN should remain supported in the Jetty 9.2.x series, and will be updated as new JDK 7 versions are released.
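To make the NPN/ALPN difference a little more concrete: with ALPN the client lists the protocols it speaks in its TLS ClientHello and the server makes the choice. The following is only a minimal sketch of that server-side choice, not Jetty’s ALPN code; the class `AlpnSketch`, the method `select` and the example protocol lists are hypothetical names chosen for illustration.

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical sketch: server-side protocol selection in the style of ALPN.
class AlpnSketch {
    // Returns the first protocol in the server's preference order that the
    // client also offered, or null when there is no protocol in common.
    static String select(List<String> serverPreferences, List<String> clientOffers) {
        for (String protocol : serverPreferences) {
            if (clientOffers.contains(protocol)) {
                return protocol;
            }
        }
        return null;
    }

    public static void main(String[] args) {
        // Assumed example: a server that prefers HTTP/2 draft 14 over HTTP/1.1.
        List<String> server = Arrays.asList("h2-14", "http/1.1");
        System.out.println(select(server, Arrays.asList("h2-14", "spdy/3.1", "http/1.1")));
        System.out.println(select(server, Arrays.asList("http/1.1")));
    }
}
```

Returning `null` here stands in for the handshake failure a real server would signal when there is no protocol in common.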

Contact us if you are interested in migrating your existing SPDY solutions to HTTP/2.

Category: Jetty

-

Phasing out SPDY support

-

JavaOne 2014 Servlet 3.1 Async I/O Session

Greg Wilkins gave the following session at JavaOne 2014 about Servlet 3.1 Async I/O.

It’s a great talk in many ways.

You get to know from an insider of the Servlet Expert Group about the design of the Servlet 3.1 Async I/O APIs.

You get to know, from the person who created and developed Jetty and has implemented Servlet specifications for 19 years, what the gotchas of these new APIs are.

You get many great insights on how to write correct asynchronous code, and believe it: it’s not as straightforward as you think!

You get to know that Jetty 9 supports Servlet 3.1 and you should start using it today 🙂

Enjoy!

-

Jetty 7 and Jetty 8 – End of Life

Five years ago we migrated the Jetty project from The Codehaus to the Eclipse Foundation. In that time we have pushed out 101 releases of Jetty 7 and Jetty 8, double that if you count the artifacts that had to remain at the Codehaus for the interim.

Four years ago we ceased open source support for Jetty 6.

Two years ago we released the first milestone of Jetty 9 and there have been 34 releases since. Jetty 9 has been very well received and feedback on it has been overwhelmingly positive from both our client community and the broader open source community. We will continue to improve upon Jetty 9 for years to come and we are very excited to see how innovative features like HTTP/2 support play out as these rather fundamental changes take root. Some additional highlights for Jetty 9 are: Java 7+, Servlet 3.1+, JSR 356 WebSocket, and SPDY! You can read more about Jetty 9.2 from the release blog here. Additionally we will have Jetty 9.3 releasing soon which contains support for HTTP/2!

This year will mark the end of our open source support for Jetty 7 and Jetty 8. Earlier this week we pushed out a maintenance release that resolved only a handful of issues from the last five months, so releases have obviously slowed to a trickle. Barring any significant security-related issue, it is unlikely we will see more than a release or two remaining on Jetty 7 and Jetty 8. We recommend users update to Jetty 9 as soon as they are able to work it into their schedule. For most people we work with, the migration has been trivial, certainly nothing on the scale of the migration between foundations.

Important to note is that this is strictly regarding open source support. Webtide is the professional services arm of the Jetty Project and there will always be active professional developer and production support available for clients on Jetty 7 and Jetty 8. We even have clients with Jetty 6 support who have been unable to migrate for a host of other reasons. The services and support we provide through Webtide are what fund the ongoing development of the Jetty platform. We have no licensed version of Jetty that we try to sell to enterprise users; the open source version of Jetty is the professional version. Feel free to explore the rest of this site to learn more about Webtide, and if you have any questions feel free to comment, post a message to the mailing lists, or fill out the contact form on this site (and I will follow up!).

-

Jetty @ JavaOne 2014

I’ll be attending JavaOne Sept 29 to Oct 1 and will be presenting several talks on Jetty:

- CON2236 Servlet Async IO: I’ll be looking at the servlet 3.1 asynchronous IO API and how to use it for scale and low latency. Also covers a little bit about how we are using it with http2. There is an introduction video but the talk will be a lot more detailed and hopefully interesting.

- BOF2237 Jetty Features: This will be a free-form, two-way discussion about new features in jetty and its future direction: http2, modules, admin consoles, dockers etc. are all good topics for discussion.

- CON5100 Java in the Cloud: This is primarily a Google session, but I’ve been invited to present the work we have done improving the integration of Jetty into their cloud offerings.

I’ll be in the Bay area from the 23rd and I’d be really pleased to meet up with Jetty users in the area before or during the conference for anything from an informal chat/drink/coffee up to the full sales pitch of Intalio|Webtide services (or even both!) – <gregw@intalio.com>

-

HTTP/2 Interoperability and HTTP/2 Push

Following my previous post, several players tried their HTTP/2 implementation of draft 14 (h2-14) against webtide.com.

A few issues were found and quickly fixed on our side, and this is very good for interoperability.

Having worked many times at implementing specifications, I know that different people interpret the same specification in slightly different ways that may lead to incompatibilities.

@badger and @tatsuhiro_t reported that curl + nghttp2 is working correctly against webtide.com.

On the Firefox side, @todesschaf reported a couple of edge cases that were fixed, so expect a Firefox nightly soon (if not already out?) that supports h2-14.

We are actively working on porting the SPDY Push implementation to HTTP/2, and Firefox should already support HTTP/2 Push, so there will be more interoperability testing to do, which is good.

This work is being done in conjunction with an experimental Servlet API so that web applications will be able to tell the container what resources should be pushed. This experimental push API is scheduled to be defined by the Servlet 4.0 specification, so once again the Jetty project is leading the way like it did for async Servlets, SPDY and SPDY Push.

Why should you care about all this?

Because SPDY Push can boost your website performance, and more performance means more money for your business.

Interested? Contact us.

-

HTTP/2 Last Call!

The IETF HTTP working group has issued a last call for comments on the proposed HTTP/2 standard, which means that the process has entered the final stage of open community review before the current draft may become an RFC. Jetty has implemented the proposal already and this website is running it already! There is a lot of good in this proposed standard, but I have some deep reservations about some bad and ugly aspects of the protocol.

The Good

HTTP/2 is a child born of the SPDY protocol developed by Google and continues to seek the benefits that have been illuminated by that grand experiment. Specifically:

- The new protocol supports the same semantics as HTTP/1.1, which were recently clarified by RFC 7230. This will allow most of the benefits of HTTP/2 to be used by applications transparently, simply by upgrading client and server infrastructure and without any application code changes.

- HTTP/2 is a multiplexed protocol that allows multiple request/response streams to share the same TCP/IP connection. It supports out-of-order delivery of responses, so it does not suffer from the same Head of Line Blocking issues as HTTP/1.1 pipelining did. Clients will no longer need multiple connections to the same origin server to ensure good quality of service when rendering a page made from many resources, which means a very significant saving in the resources needed by the server and also reduces the sticky session problems for load balancers.

- HTTP headers are very verbose and highly redundant. HTTP/2 provides an effective compression algorithm (HPACK) that is tailored to HTTP and avoids many of the security issues with using general purpose compression algorithms over TLS connections. Reduced header size allows many requests to be sent over a newly opened TCP/IP connection without the need to wait for its congestion control window to grow to the capacity of the link. This significantly reduces the number of network round trips required to render a page.

- HTTP/2 supports pushed resources, so that an origin server can anticipate requests for associated resources and push them to the client’s cache, again saving further network round trips.
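As a rough illustration of the multiplexing point in the list above, the sketch below splits two responses into frames and interleaves them on one connection, so the small response is not stuck behind the large one. It is a simplified model under stated assumptions, not Jetty code and not the wire format: the class `MultiplexSketch`, the round-robin policy and the 16 byte frame size are all hypothetical choices for illustration (HTTP/2 does not mandate round-robin).

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: interleaving frames from several streams on one connection.
class MultiplexSketch {
    // Splits each stream's payload into frames of at most maxFrame bytes and
    // emits them round-robin, one frame per stream per turn.
    // Each entry of the result is "streamId:frameLength".
    static List<String> interleave(Map<Integer, Integer> payloadSizes, int maxFrame) {
        Deque<int[]> pending = new ArrayDeque<>();
        for (Map.Entry<Integer, Integer> e : payloadSizes.entrySet()) {
            pending.add(new int[] {e.getKey(), e.getValue()});
        }
        List<String> wire = new ArrayList<>();
        while (!pending.isEmpty()) {
            int[] stream = pending.poll();
            int length = Math.min(maxFrame, stream[1]);
            wire.add(stream[0] + ":" + length);
            stream[1] -= length;
            if (stream[1] > 0) {
                pending.add(stream); // more to send: go to the back of the queue
            }
        }
        return wire;
    }

    public static void main(String[] args) {
        Map<Integer, Integer> sizes = new LinkedHashMap<>();
        sizes.put(1, 40); // a large response on stream 1
        sizes.put(3, 10); // a small response on stream 3
        // Stream 3 completes after the first frame of stream 1,
        // instead of waiting for all 40 bytes as it would with pipelining.
        System.out.println(interleave(sizes, 16));
    }
}
```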

You can see from these key features that HTTP/2 is primarily focused on improving the speed to render a page, which is (as the book of speed points out) a good focus to have. To a lesser extent, the process has also considered throughput and server resources, but these have not been key drivers. Indeed, data rates may even suffer under HTTP/2, and servers need to commit more resources to each connection, which may consume much of the savings from fewer connections.

The Bad

While the working group was chartered to address the misuse of the underlying transport occurring in HTTP/1.1 (e.g. long polling), it did not make much of the suggestion to coordinate with other working groups regarding the possible future extension of HTTP/2.0 to carry WebSocket semantics. While a WebSocket over http2 draft has been written, some of the features that draft referenced have subsequently been removed from HTTP/2 and the protocol is primarily focused on providing HTTP semantics.

The proposed protocol does not have a clear separation between a framing layer and the HTTP semantics that can be carried by that layer. I was expecting to see a clear multiplexed, flow controlled framing layer that could be used for many different semantics including HTTP and WebSocket. Instead we have a framing protocol aimed primarily at HTTP which, to quote the draft’s editor:

“What we’ve actually done here is conflate some of the stream control functions with the application semantics functions in the interests of efficiency” — Martin Thomson 8/May/2014

I’m dubious there are significant efficiencies from conflating layers, but even if there are, I believe that such a design will make it much harder to carry WebSocket or other new web semantics over the http2 framing layer. HTTP semantics are hard baked into the framing, so intermediaries (routers, hubs, load balancers, firewalls etc.) will be deployed that have HTTP semantics hard wired. The only way that any future web semantic will be able to be transported over future networks will be to use the trick of pretending to be HTTP, which is exactly the kind of misuse of the underlying transport that HTTP/2 was intended to address. I know it is difficult to generalise from one example, but today we have both HTTP and WebSocket semantics widely used on the web, so it would have been sensible to consider both examples equally when designing the next generation web framing layer.

An early version of the draft had a header compression algorithm that was highly stateful, which meant that a single stream’s headers had to be encoded/decoded in total before another stream’s headers could be encoded/decoded. Thus a restriction was put into the protocol to prevent headers being transmitted as multiple frames interleaved with other streams’ frames. Furthermore, headers are excluded from the multiplexing flow control algorithm because, once encoded, transmission cannot be delayed without stopping all other encoding/decoding.

The proposed standard has a less stateful compression algorithm, so it is now technically possible to interleave other frames between the fragments of a header. It is still not possible to flow control headers, but there is no technical reason that a large header should prevent other streams from progressing. However, a concern about denial of service was raised in the working group, and while I argued that it was no worse than without interleaving, the working group was unable to reach consensus to remove the interleaving restriction.

Thus HTTP/2 has a significant incentive for applications to move large data into headers, as this data will effectively take control of the entire multiplexed connection and will be transmitted at full network speed regardless of any http2 flow control windows or other streams that may need to progress. If applications take up these incentives, then the quality of service offered by the multiplexed connection will suffer and the Head of Line Blocking issue that HTTP/2 was meant to address will return, as large headers will hit TCP/IP flow control and stop all streams. When this does happen, clients are likely to do exactly as they did with HTTP/1.1: ignore any specifications about connection limits and just open multiple connections, so that requests can overtake others that are using large headers to try to take an unfair proportion of a shared connection. This is a catastrophic scenario for servers, as not only will we have the increased resources required by HTTP/2 connections, but we will also have the multiple connections required by HTTP/1.
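The asymmetry between headers and data described above can be sketched as follows. This is a toy model, not Jetty’s implementation: the class `FlowControlSketch` and its methods are hypothetical, and the only facts taken from the protocol are that DATA frames count against the flow control window while HEADERS do not, and that the default initial window is 65,535 bytes.

```java
// Hypothetical sketch: only DATA counts against the flow-control window.
class FlowControlSketch {
    private int window;

    FlowControlSketch(int initialWindow) {
        this.window = initialWindow;
    }

    // Returns how many bytes of the frame can be sent right now.
    // DATA is limited by (and consumes) the remaining window; HEADERS and
    // their CONTINUATIONs are not flow controlled, so they go out in full.
    int send(String frameType, int length) {
        if (!"DATA".equals(frameType)) {
            return length;
        }
        int allowed = Math.min(length, window);
        window -= allowed;
        return allowed;
    }

    int remainingWindow() {
        return window;
    }

    public static void main(String[] args) {
        FlowControlSketch sender = new FlowControlSketch(65535);
        System.out.println(sender.send("DATA", 100000));    // capped by the window
        System.out.println(sender.send("DATA", 1000));      // window exhausted
        System.out.println(sender.send("HEADERS", 100000)); // not capped at all
    }
}
```

In this model a large body has to wait for window updates, while the same bytes moved into headers would not, which is the incentive the paragraph above worries about.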

I would like to think that I’m being melodramatic here and predicting a disaster that will never happen. However the history from HTTP/1.1 is that speed is king and that vendors are prepared to break the standards and stress the servers so that applications appear to run faster on their browsers, even if it is only until the other vendors adopt the same protocol abuse. I think we are needlessly setting up the possibility of such a catastrophic protocol fail to protect against a DoS attack vector that must be defended anyway.

The Ugly

There are many aspects of the protocol design that can’t be described as anything but ugly. But unfortunately even though many in the working group agree that they are indeed ugly, the IETF process does not consider aesthetic appeal and thus the current draft is seen to be without issue (even though many have argued that the ugliness means that there will be much misunderstanding and poor implementations of the protocol). I’ll cite one prime example:

A classic case of design ugliness is the END_STREAM flag. The multiplexed streams are comprised of a sequence of frames, some of which can carry the END_STREAM flag to indicate that the stream is ending in that direction. The draft captures the resulting state machine in this simple diagram:

                             +--------+
                       PP    |        |    PP
                    ,--------|  idle  |--------.
                   /         |        |         \
                  v          +--------+          v
           +----------+          |           +----------+
           |          |          | H         |          |
       ,---| reserved |          |           | reserved |---.
       |   | (local)  |          v           | (remote) |   |
       |   +----------+      +--------+      +----------+   |
       |      |          ES  |        |  ES          |      |
       |      | H    ,-------|  open  |-------.      | H    |
       |      |     /        |        |        \     |      |
       |      v    v         +--------+         v    v      |
       |   +----------+          |           +----------+   |
       |   |   half   |          |           |   half   |   |
       |   |  closed  |          | R         |  closed  |   |
       |   | (remote) |          |           | (local)  |   |
       |   +----------+          |           +----------+   |
       |        |                v                 |        |
       |        |  ES / R    +--------+  ES / R    |        |
       |        `----------->|        |<-----------'        |
       |  R                  | closed |                  R  |
       `-------------------->|        |<--------------------'
                             +--------+

       H:  HEADERS frame (with implied CONTINUATIONs)
       PP: PUSH_PROMISE frame (with implied CONTINUATIONs)
       ES: END_STREAM flag
       R:  RST_STREAM frame

That looks simple enough: a stream is open until an END_STREAM flag is sent/received, at which stage it is half closed, and then when another END_STREAM flag is received/sent the stream is fully closed. But wait, there’s more! A stream can continue sending several frame types after a frame with the END_STREAM flag set, and these frames may contain semantic data (trailers) or protocol actions that must be acted on (push promises) as well as frames that can just be ignored. This introduces so much complexity that the draft requires 7 paragraphs of dense text to specify the frame handling that must be done once you’re in the Closed state! It is as if TCP/IP had been specified without CLOSE_WAIT. Worse yet, it is as if you could continue to send urgent data over a socket after it has been closed!
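The edges of the diagram alone can be sketched as a small transition function. This is an illustrative reading of the diagram, not Jetty’s implementation: the class `StreamStateSketch` and its enums are hypothetical, and the send/receive direction attached to each edge is my assumption about what the unlabelled arrows mean. It deliberately models none of the frames that may still arrive after END_STREAM, which is exactly the complexity the diagram hides.

```java
// Hypothetical sketch of the basic stream transitions shown in the diagram.
class StreamStateSketch {
    enum State { IDLE, RESERVED_LOCAL, RESERVED_REMOTE, OPEN,
                 HALF_CLOSED_LOCAL, HALF_CLOSED_REMOTE, CLOSED }

    enum Event { SEND_PUSH_PROMISE, RECV_PUSH_PROMISE, SEND_HEADERS, RECV_HEADERS,
                 SEND_END_STREAM, RECV_END_STREAM, RST_STREAM }

    // Returns the next state, or throws if the diagram has no such edge.
    static State next(State state, Event event) {
        if (event == Event.RST_STREAM && state != State.IDLE && state != State.CLOSED) {
            return State.CLOSED; // R leads to closed from every active state
        }
        switch (state) {
            case IDLE:
                if (event == Event.SEND_PUSH_PROMISE) return State.RESERVED_LOCAL;
                if (event == Event.RECV_PUSH_PROMISE) return State.RESERVED_REMOTE;
                if (event == Event.SEND_HEADERS || event == Event.RECV_HEADERS) return State.OPEN;
                break;
            case RESERVED_LOCAL:
                if (event == Event.SEND_HEADERS) return State.HALF_CLOSED_REMOTE;
                break;
            case RESERVED_REMOTE:
                if (event == Event.RECV_HEADERS) return State.HALF_CLOSED_LOCAL;
                break;
            case OPEN:
                if (event == Event.SEND_END_STREAM) return State.HALF_CLOSED_LOCAL;
                if (event == Event.RECV_END_STREAM) return State.HALF_CLOSED_REMOTE;
                break;
            case HALF_CLOSED_LOCAL:
                if (event == Event.RECV_END_STREAM) return State.CLOSED;
                break;
            case HALF_CLOSED_REMOTE:
                if (event == Event.SEND_END_STREAM) return State.CLOSED;
                break;
            default:
                break;
        }
        throw new IllegalStateException(event + " not allowed in " + state);
    }

    public static void main(String[] args) {
        // A simple request/response as seen by a server.
        State state = State.IDLE;
        Event[] exchange = { Event.RECV_HEADERS, Event.RECV_END_STREAM, Event.SEND_END_STREAM };
        for (Event event : exchange) {
            state = next(state, event);
            System.out.println(event + " -> " + state);
        }
    }
}
```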

This situation has occurred because of the conflation of HTTP semantics with the framing layer. Instead of END_STREAM being a flag interpreted by the framing layer, the flag is actually a function of frame type and the specific frame type must be understood before the framing layer can consider any flags. With HTTP semantics, it is only legal to end some streams on some particular frame types, so the END_STREAM flag has only been put onto some specific frame types in an attempt to partially enforce good HTTP frame type sequencing (in this case to stop a response stream ending with a push promise). It is a mostly pointless attempt to enforce legal type sequencing because there are an infinite number of illegal sequences that an implementation must still check for and making it impossible to send just some sequences has only complicated the state machine and will make future non-HTTP semantics more difficult. It is a real WTF moment when you realise that valid meta-data can be sent in a frame after a frame with END_STREAM and that you have to interpret the specific frame type to locate the actual end of the stream. It is impossible to write general framing code that handles streams regardless of their type.

The proposed standard allows padding to be added to some specific frame types as a “security feature”, specifically to address “attacks where compressed content includes both attacker-controlled plaintext and secret data (see for example, [BREACH])”. The idea being that padding can be used to hide the effects of compression on sensitive data. But as the draft says “padding is a security feature; as such, its use demands some care” and it turns out to be significant care that is required:

- “Redundant padding could even be counterproductive.”

- “Correct application can depend on having specific knowledge of the data that is being padded.”

- “To mitigate attacks that rely on compression, disabling or limiting compression might be preferable to padding as a countermeasure.”

- “Use of padding can result in less protection than might seem immediately obvious.”

- “At best, padding only makes it more difficult for an attacker to infer length information by increasing the number of frames an attacker has to observe.”

- “Incorrectly implemented padding schemes can be easily defeated.”

So in short, if you are a security genius with precise knowledge of the payload then you might be able to use padding, but it will only slightly mitigate an attack. If you are not a security genius, or you don’t know what your application payload data is (which is just about everybody), then don’t even think of using padding as you’ll just make things worse. Exactly how an application is meant to tunnel information about the security nature of its data down to the frame handling code of the transport layer is not indicated by the draft and there is no guidance to say what padding to apply other than to say don’t use randomized padding.
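To make the mechanism concrete (not to recommend it, given the caveats quoted above): a padded frame payload in the draft carries a one byte Pad Length field, then the data, then that many zero bytes. The sketch below pads data up to the next multiple of a block size. The class `PaddingSketch`, the method `pad` and the block-size policy are hypothetical and chosen only for illustration; whether such a policy helps at all depends on exactly the payload knowledge the draft says is required.

```java
// Hypothetical sketch: deterministic padding of a frame payload to a block size.
class PaddingSketch {
    // Builds [Pad Length][data][padding zeros], padding the data up to the
    // next multiple of blockSize. blockSize is limited to 256 so that the pad
    // length (at most blockSize - 1) fits in the single Pad Length byte.
    static byte[] pad(byte[] data, int blockSize) {
        if (blockSize < 1 || blockSize > 256) {
            throw new IllegalArgumentException("blockSize must be in 1..256");
        }
        int padLength = (blockSize - data.length % blockSize) % blockSize;
        byte[] payload = new byte[1 + data.length + padLength];
        payload[0] = (byte) padLength; // read back with (payload[0] & 0xFF)
        System.arraycopy(data, 0, payload, 1, data.length);
        // The trailing padLength bytes are already zero in a new array.
        return payload;
    }

    public static void main(String[] args) {
        byte[] padded = pad(new byte[10], 16);
        System.out.println("pad length = " + (padded[0] & 0xFF)
                + ", payload length = " + padded.length);
    }
}
```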

I doubt this feature will ever be used for security, but I suspect that it will be used for smuggling illicit data through firewalls.

What Happens Next?

This blog is not a call for others to voice support for these concerns in the working group. The IETF process does not work like that: there are no votes and weight of numbers does not count. But on the other hand, don’t let me discourage you from participating if you feel you have something to contribute other than numbers.

There has been a big effort by many in the working group to address the concerns that I’ve described here. The process has given critics fair and ample opportunity to voice concerns and to make the case for change. But despite months of dense debate, there is no consensus in the WG that the bad/ugly concerns I have outlined here are indeed issues that need to be addressed. We are entering a phase now where only significant new information will change the destiny of http/2, and that will probably have to be in the form of working code rather than voiced concerns (an application that exploits large headers to the detriment of other tabs/users would be good, or a DoS attack using continuation trailers).

Finally, please note that my enthusiasm for the Good is not dimmed by my concerns for the Bad and Ugly. The Jetty team is well skilled to deal with the Ugly for you and we’ll do our best to hide the Bad as well, so you’ll only see the benefits of the Good. Jetty-9.3 is currently available as a development branch and currently supports draft 14 of HTTP/2, and this website is running on it! Work is under way on the current draft 14 and that should be supported in a few days. We are reaching out to users and clients who would like to collaborate on evaluating the pros/cons of this emerging standard.

-

HTTP/2 draft 14 is live!

Greg Wilkins (@gregwilkins) and I (@simonebordet) have been working on implementing HTTP/2 draft 14 (h2-14), which is the draft that will probably undergo the “last call” at the IETF.

We will blog very soon with our opinions about HTTP/2 (stay tuned, it’ll be interesting!), but for the time being Jetty proves once again to be a trailblazer when it comes to new web technologies and web protocols.

Jetty started to innovate with Jetty Continuations, which were standardized (with improvements) into Servlet 3.0.

Jetty was one of the first Java servers to offer support for asynchronous I/O, back in 2006 with Jetty 6.

In 2012 we were the first Java server to implement SPDY, and we have written libraries that provide support for NPN in Java (which are now used by many other Java servers that provide SPDY support). We were also the first to implement a completely automatic way of leveraging SPDY Push, which can boost your web site performance.

Today, to my knowledge, we are again the first Java server exposing the implementation of the HTTP/2 protocol, draft 14, live on our own website.

Along with HTTP/2 support, which will be coming in Jetty 9.3, we have also implemented a library that provides support for ALPN in Java (the successor of NPN), allowing every Java application (client or server) to implement HTTP/2 over SSL. This library is already available in the Jetty 9.2.x series. We want other implementers (client and server) to test our HTTP/2 implementation in order to generate feedback about HTTP/2 that can be reported at the IETF.

As of today, both Mozilla Firefox and Google Chrome only support HTTP/2 draft 13 (h2-13). They are keeping pace in implementing new drafts, so expect both browsers to offer draft 14 support in a matter of days (in their nightly/unstable versions). When that happens, you will be able to use those browsers to connect to our HTTP/2 enabled website.

The Jetty project offers not only a server, but an HTTP/2 client as well. You can take a look at how it’s used to connect to an HTTP/2 server here.

Where is it? https://webtide.com.

Lastly, contact us for any news or information about what Jetty can do for you in the realms of async I/O, PubSub over the web (via CometD), SPDY and HTTP/2.

-

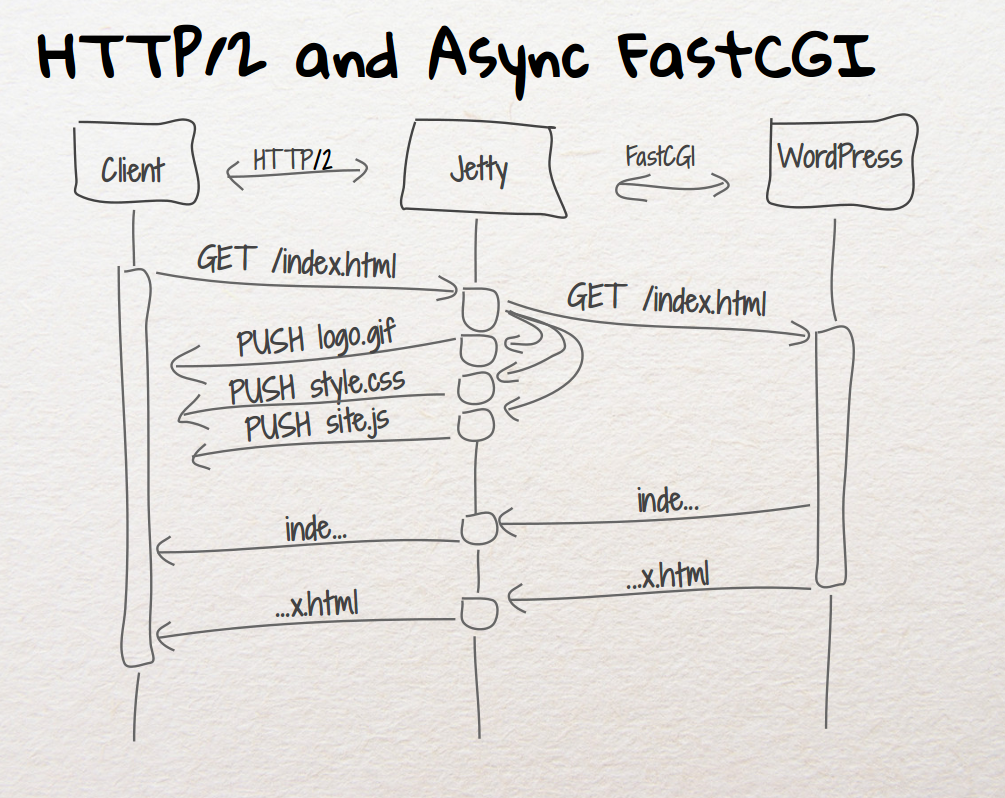

Jetty 9.1.4 Open Sources FastCGI Proxy

I wrote in the past about the support that was added to Jetty 9.1 to proxy HTTP requests to a FastCGI server.

A typical configuration to serve PHP applications such as WordPress or Drupal is to put Apache or Nginx in the front and have them proxy the HTTP requests to, typically, php-fpm (a FastCGI server included in the PHP distribution), which in turn runs the PHP scripts that generate HTML.

Jetty’s support for FastCGI proxying has been kept private until now.

With the release of Jetty 9.1.4 it is now part of the main Jetty distribution, released under the same license (Apache License or Eclipse Public License) as Jetty.

Since we like to eat our own dog food, Jetty is currently serving the pages of this blog (which is WordPress) using Jetty 9.1.4 and the newly released FastCGI module.

And it is doing so via SPDY, rather than HTTP, allowing you to serve Java EE Web Applications and PHP Web Applications from the same Jetty instance and leveraging the benefits that the SPDY protocol brings to the Web.

For further information and details on how to use this new module, please check the Jetty FastCGI documentation.

Enjoy!

-

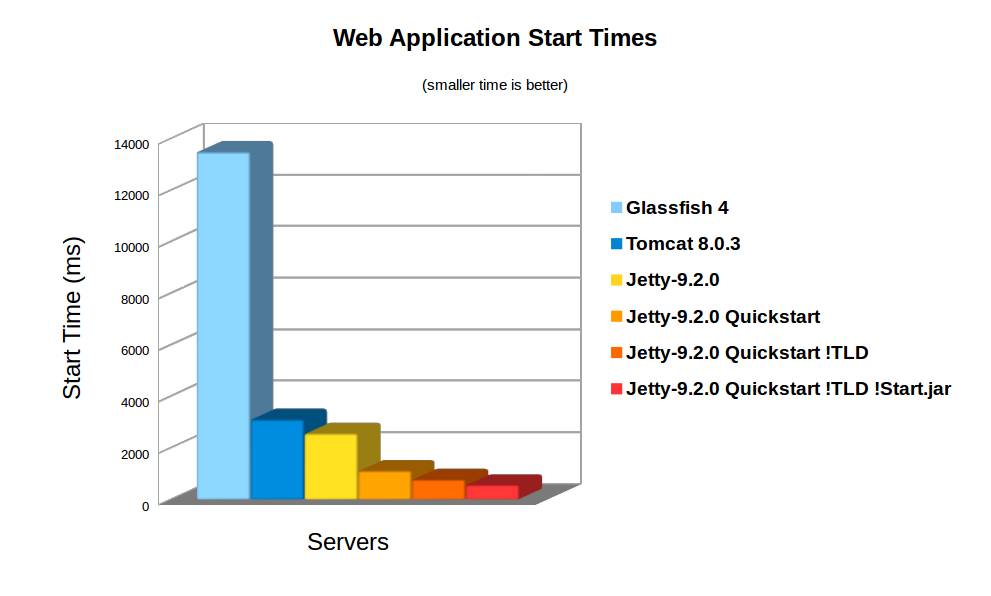

Jetty 9 Quick Start

The auto discovery features of the Servlet specification can make deployments slow and uncertain. Working in collaboration with Google AppEngine, the Jetty team has developed the Jetty quick start mechanism that allows rapid and consistent starting of a Servlet server. Google AppEngine has long used Jetty for its footprint and flexibility, and now fast and predictable starting is a new compelling reason to use jetty in the cloud. As Google App Engine is specifically designed with highly variable, bursty workloads in mind, rapid start-up time leads directly to cost-efficient scaling.

Servlet Container Discovery Features

The last few iterations of the Servlet 3.x specification have added a lot of features related to making development easier by auto discovery and configuration of Servlets, Filters and frameworks:

| Discover / From: | Selected Container Jars | WEB-INF/classes/* | WEB-INF/lib/*.jar |
|---|---|---|---|
| Annotated Servlets & Filters | Y | Y | Y |
| web.xml fragments | Y | | Y |
| ServletContainerInitializers | Y | | Y |
| Classes discovered by HandlesTypes | Y | Y | Y |
| JSP Taglib descriptors | Y | | Y |

Slow Discovery

Auto discovery of Servlet configuration can be useful during the development of a webapp as it allows new features and frameworks to be enabled simply by dropping in a jar file. However, for deployment, the need to scan the contents of many jars can have a significant impact on the start time of a webapp. In the cloud, where server instances are often spun up on demand, having a slow start time can have a big impact on the resources needed.

Consider a cluster under load that determines that an extra node is desirable: every second the new node spends scanning its own classpath for configuration is a second that the entire cluster is overloaded and giving less than optimal performance. To counter the inability to quickly bring on new instances, cluster administrators have to provision more idle capacity that can handle a burst in load while more capacity is brought on line. This extra idle capacity is carried for all the time the application is running, and thus a slowly starting server increases costs over the whole application deployment.

On average server hardware, a moderate webapp with 36 jars (using spring, jersey & jackson) took 3,158ms to deploy on Jetty 9. Now this is pretty fast, and some Servlet containers (eg Glassfish) take more time to initialise their logging. However, 3s is still over a reasonable threshold for making a user wait whilst dynamically starting an instance. Also, many webapps now have over 100 jar dependencies, so scanning time can be a lot longer.

Using the standard metadata-complete option does not significantly speed up start times, as TLDs and HandlesTypes classes still must be discovered even with metadata-complete set. Unpacking the war file saves a little more time, but even with both of these, Jetty still takes 2,747ms to start.

Unknown Deployment

Another issue with discovery is that the exact configuration of a webapp is not fully known until runtime. New Servlets and frameworks can be accidentally deployed if jar files are upgraded without their contents being fully investigated. This gives some deployers a large headache with regards to security audits and just general predictability.

It is possible to disable some auto discovery mechanisms by using the metadata-complete setting within web.xml. However, that does not disable the scanning of HandlesTypes, so it neither avoids the need to scan nor avoids auto deployment of accidentally included types and annotations.

Jetty 9 Quickstart

From release 9.2.0 of Jetty, we have included the quickstart module that allows a webapp to be pre-scanned and preconfigured. This means that all the scanning is done prior to deployment and all configuration is encoded into an effective web.xml, called WEB-INF/quickstart-web.xml, which can be inspected to understand what will be deployed before deploying.

Not only does the quickstart-web.xml contain all the discovered Servlets, Filters and Constraints, but it also encodes as context parameters all discovered:

- ServletContainerInitializers

- HandlesTypes classes

- Taglib Descriptors

With the quickstart mechanism, jetty is able to entirely bypass all scanning and discovery modes and start a webapp in a predictable and fast way.

Using Quickstart

Preparing a jetty instance for testing the quickstart mechanism is extremely simple using the jetty module system. Firstly, if JETTY_HOME is pointing to a jetty distribution >= 9.2.0, then we can prepare a Jetty instance for a normal deployment of our benchmark webapp:

    > JETTY_HOME=/opt/jetty-9.2.0.v20140526
    > mkdir /tmp/demo
    > cd /tmp/demo
    > java -jar $JETTY_HOME/start.jar --add-to-startd=http,annotations,plus,jsp,deploy
    > cp /tmp/benchmark.war webapps/

and we can see how long this takes to start normally:

    > java -jar $JETTY_HOME/start.jar
    2014-03-19 15:11:18.826:INFO::main: Logging initialized @255ms
    2014-03-19 15:11:19.020:INFO:oejs.Server:main: jetty-9.2.0.v20140526
    2014-03-19 15:11:19.036:INFO:oejdp.ScanningAppProvider:main: Deployment monitor [file:/tmp/demo/webapps/] at interval 1
    2014-03-19 15:11:21.708:INFO:oejsh.ContextHandler:main: Started o.e.j.w.WebAppContext@633a6671{/benchmark,file:/tmp/jetty-0.0.0.0-8080-benchmark.war-_benchmark-any-8166385366934676785.dir/webapp/,AVAILABLE}{/benchmark.war}
    2014-03-19 15:11:21.718:INFO:oejs.ServerConnector:main: Started ServerConnector@21fd3544{HTTP/1.1}{0.0.0.0:8080}
    2014-03-19 15:11:21.718:INFO:oejs.Server:main: Started @2579ms

So the JVM started and loaded the core of jetty in 255ms, but another 2324ms were needed to scan and start the webapp.
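The 2324ms figure is simply the difference between the two `@<n>ms` uptime markers in the log. As a small sketch of how that could be pulled out of such a log automatically (the class `StartTimeSketch` and the method `uptimeMillis` are hypothetical helpers, not part of Jetty):

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: reads the "@<n>ms" uptime marker from a Jetty log line.
class StartTimeSketch {
    private static final Pattern UPTIME = Pattern.compile("@(\\d+)ms");

    // Extracts the JVM uptime in milliseconds from a line containing "@<n>ms".
    static long uptimeMillis(String logLine) {
        Matcher matcher = UPTIME.matcher(logLine);
        if (!matcher.find()) {
            throw new IllegalArgumentException("no @<n>ms marker in: " + logLine);
        }
        return Long.parseLong(matcher.group(1));
    }

    public static void main(String[] args) {
        long core = uptimeMillis("2014-03-19 15:11:18.826:INFO::main: Logging initialized @255ms");
        long started = uptimeMillis("2014-03-19 15:11:21.718:INFO:oejs.Server:main: Started @2579ms");
        System.out.println((started - core) + "ms spent scanning and starting the webapp");
    }
}
```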

To quick start this webapp, we need to enable the quickstart module and use an example context xml file to configure the benchmark webapp to use it:

    > java -jar $JETTY_HOME/start.jar --add-to-startd=quickstart
    > cp $JETTY_HOME/etc/example-quickstart.xml webapps/benchmark.xml
    > vi webapps/benchmark.xml

The benchmark.xml file should be edited to point to the benchmark.war file:

    <?xml version="1.0" encoding="ISO-8859-1"?>
    <!DOCTYPE Configure PUBLIC "-//Jetty//Configure//EN" "http://www.eclipse.org/jetty/configure_9_0.dtd">
    <Configure class="org.eclipse.jetty.quickstart.QuickStartWebApp">
      <Set name="autoPreconfigure">true</Set>
      <Set name="contextPath">/benchmark</Set>
      <Set name="war"><Property name="jetty.webapps" default="."/>/benchmark.war</Set>
    </Configure>

Now the next time the webapp is run, it will be preconfigured (taking a little bit longer than normal start):

    > java -jar $JETTY_HOME/start.jar
    2014-03-19 15:21:16.442:INFO::main: Logging initialized @237ms
    2014-03-19 15:21:16.624:INFO:oejs.Server:main: jetty-9.2.0.v20140526
    2014-03-19 15:21:16.642:INFO:oejdp.ScanningAppProvider:main: Deployment monitor [file:/tmp/demo/webapps/] at interval 1
    2014-03-19 15:21:16.688:INFO:oejq.QuickStartWebApp:main: Quickstart Extract file:/tmp/demo/webapps/benchmark.war to file:/tmp/demo/webapps/benchmark
    2014-03-19 15:21:16.733:INFO:oejq.QuickStartWebApp:main: Quickstart preconfigure: o.e.j.q.QuickStartWebApp@54318a7a{/benchmark,file:/tmp/demo/webapps/benchmark,null}(war=file:/tmp/demo/webapps/benchmark.war,dir=file:/tmp/demo/webapps/benchmark)
    2014-03-19 15:21:19.545:INFO:oejq.QuickStartWebApp:main: Quickstart generate /tmp/demo/webapps/benchmark/WEB-INF/quickstart-web.xml
    2014-03-19 15:21:19.879:INFO:oejsh.ContextHandler:main: Started o.e.j.q.QuickStartWebApp@54318a7a{/benchmark,file:/tmp/demo/webapps/benchmark,AVAILABLE}
    2014-03-19 15:21:19.893:INFO:oejs.ServerConnector:main: Started ServerConnector@63acac21{HTTP/1.1}{0.0.0.0:8080}
    2014-03-19 15:21:19.894:INFO:oejs.Server:main: Started @3698ms

After preconfiguration, on all subsequent starts it will be quick started:

> java -jar $JETTY_HOME/start.jar
2014-03-19 15:21:26.069:INFO::main: Logging initialized @239ms
2014-03-19 15:21:26.263:INFO:oejs.Server:main: jetty-9.2.0-SNAPSHOT
2014-03-19 15:21:26.281:INFO:oejdp.ScanningAppProvider:main: Deployment monitor [file:/tmp/demo/webapps/] at interval 1
2014-03-19 15:21:26.941:INFO:oejsh.ContextHandler:main: Started o.e.j.q.QuickStartWebApp@559d6246{/benchmark,file:/tmp/demo/webapps/benchmark/,AVAILABLE}{/benchmark/}
2014-03-19 15:21:26.956:INFO:oejs.ServerConnector:main: Started ServerConnector@4a569e9b{HTTP/1.1}{0.0.0.0:8080}
2014-03-19 15:21:26.956:INFO:oejs.Server:main: Started @1135ms

So quickstart has reduced the start time from 3158ms to approx 1135ms!
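The reduction quoted above can be checked directly from the log output. The following is a minimal sketch, assuming the `Started @<n>ms` suffix format shown in the logs; the helper names `started_ms` and `percent_saved` are hypothetical and not part of Jetty.

```shell
#!/bin/sh
# Hypothetical helpers (not part of Jetty): pull the start time out of a
# "Started @<n>ms" log line and compare two start times.

# Print the millisecond value from a line such as
# "...:INFO:oejs.Server:main: Started @1135ms".
started_ms() {
    printf '%s\n' "$1" | sed -n 's/.*Started @\([0-9][0-9]*\)ms.*/\1/p'
}

# Print the whole-number percentage saved going from $1 ms to $2 ms
# (integer arithmetic, so the result is truncated).
percent_saved() {
    echo $(( ($1 - $2) * 100 / $1 ))
}

quick=$(started_ms '2014-03-19 15:21:26.956:INFO:oejs.Server:main: Started @1135ms')
echo "quickstart run: ${quick}ms, saving $(percent_saved 3158 "$quick")% of the 3158ms start"
```

With the figures above this reports a saving of about 64%, that is, the quick started webapp comes up in a little over a third of the original time.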

Moreover, the entire configuration of the webapp is visible in webapps/benchmark/WEB-INF/quickstart-web.xml. This file can be examined and all the deployed elements can be easily audited.

Starting Faster!

Avoiding TLD scans

The Jetty 9.2 distribution switched from the GlassFish JSP engine to the Apache Jasper JSP implementation. Unfortunately this JSP implementation will always scan for TLDs, which turns out to take a significant amount of time during startup. So we have modified the standard JSP initialisation to skip TLD parsing altogether if the JSPs have been precompiled.

To let the JSP implementation know that all JSPs have been precompiled, a context parameter needs to be set in web.xml:

<context-param>
  <param-name>org.eclipse.jetty.jsp.precompiled</param-name>
  <param-value>true</param-value>
</context-param>

This is done automagically if you use the Jetty Maven JSPC plugin. Now after the first run, the webapp starts in 797ms:

> java -jar $JETTY_HOME/start.jar
2014-03-19 15:30:26.052:INFO::main: Logging initialized @239ms
2014-03-19 15:30:26.245:INFO:oejs.Server:main: jetty-9.2.0.v20140526
2014-03-19 15:30:26.260:INFO:oejdp.ScanningAppProvider:main: Deployment monitor [file:/tmp/demo/webapps/] at interval 1
2014-03-19 15:30:26.589:INFO:oejsh.ContextHandler:main: Started o.e.j.q.QuickStartWebApp@414fabe1{/benchmark,file:/tmp/demo/webapps/benchmark/,AVAILABLE}{/benchmark/}
2014-03-19 15:30:26.601:INFO:oejs.ServerConnector:main: Started ServerConnector@50473913{HTTP/1.1}{0.0.0.0:8080}
2014-03-19 15:30:26.601:INFO:oejs.Server:main: Started @797ms

Bypassing start.jar

The jetty start.jar is a very powerful and flexible mechanism for constructing a classpath and executing a configuration encoded in jetty XML format. However, this mechanism does take some time to build the classpath. The start.jar mechanism can be bypassed by using the --dry-run option to generate and reuse a complete command line to start jetty:

> RUN=$(java -jar $JETTY_HOME/start.jar --dry-run)
> eval $RUN
2014-03-19 15:53:21.252:INFO::main: Logging initialized @41ms
2014-03-19 15:53:21.428:INFO:oejs.Server:main: jetty-9.2.0.v20140526
2014-03-19 15:53:21.443:INFO:oejdp.ScanningAppProvider:main: Deployment monitor [file:/tmp/demo/webapps/] at interval 1
2014-03-19 15:53:21.761:INFO:oejsh.ContextHandler:main: Started o.e.j.q.QuickStartWebApp@7a98dcb{/benchmark,file:/tmp/demo/webapps/benchmark/,AVAILABLE}{file:/tmp/demo/webapps/benchmark/}
2014-03-19 15:53:21.775:INFO:oejs.ServerConnector:main: Started ServerConnector@66ca206e{HTTP/1.1}{0.0.0.0:8080}
2014-03-19 15:53:21.776:INFO:oejs.Server:main: Started @582ms

Classloading?

With the quickstart mechanism, the start time of the jetty server is dominated by classloading: profiling shows over 50% of the CPU time spent within URLClassLoader, and the next hot spot is XML parsing at 4%. Thus a small gain could be made by pre-parsing the XML into byte code calls, but any more significant progress will probably need examination of the class loading mechanism itself. We have experimented with combining all classes into a single jar or a classes directory, but with no further gains.

Conclusion

The Jetty-9 quick start mechanism provides almost an order of magnitude improvement in start time. This allows fast and predictable deployment, making Jetty the ideal server to be used in dynamic clouds such as Google App Engine.

-

Jetty 9 Modules and Base

Jetty has always been a highly modular project, which can be assembled in an infinite variety of ways to provide exactly the server/client/container that you require. With the recent Jetty 9.1 releases, we have added some tools to greatly improve the usability of configuring the modules used by a server.

Jetty now has the concept of a jetty home directory, where the standard distribution is installed, and a jetty base directory, which contains just the configuration of a specific instance of jetty. With this division, updating to a new version of jetty can be as simple as just updating the reference to jetty home and all the configuration in jetty base will apply.

Defining a jetty home is trivial. You simply need to unpack the jetty distribution. For this demonstration, we’ll also define an environment variable:

export JETTY_HOME=/opt/jetty-9.1.3.v20140225/

Creating a jetty base is also easy, as it is just a matter of creating a directory and using the jetty start.jar tools to enable the modules that you want. For example, to create a jetty base with an HTTP and SPDY connector, JMX and a deployment manager (to scan the webapps directory), you can do:

mkdir /tmp/demo
cd /tmp/demo
java -jar $JETTY_HOME/start.jar --add-to-startd=http,spdy,jmx,deploy

You now have a jetty base defining a simple server ready to run. Note that all the required directories have been created and additional dependencies needed for SPDY are downloaded:

demo
├── etc
│   └── keystore
├── lib
│   └── npn
├── start.d
│   ├── deploy.ini
│   ├── http.ini
│   ├── jmx.ini
│   ├── npn.ini
│   ├── server.ini
│   ├── spdy.ini
│   └── ssl.ini
└── webapps

All you need do is copy in your webapp and start the server:

cp ~/somerepo/async-rest.war webapps/
java -jar $JETTY_HOME/start.jar
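Because the base directory holds only instance configuration, moving to a newer Jetty can be sketched as repointing a single reference to jetty home. The following is a minimal sketch, not a procedure taken from the Jetty documentation: the `use_jetty_home` helper and the `/opt/jetty-current` symlink are assumptions made for illustration.

```shell
#!/bin/sh
# Hypothetical helper: repoint a stable symlink at an unpacked distribution,
# so that JETTY_HOME can stay the same in scripts across upgrades.
use_jetty_home() {
    new_home=$1   # directory of the unpacked distribution
    link=$2       # stable path that JETTY_HOME refers to
    # A distribution is recognised here simply by its start.jar.
    if [ ! -f "$new_home/start.jar" ]; then
        echo "no start.jar found in: $new_home" >&2
        return 1
    fi
    # -n replaces the link itself rather than descending into the old target
    ln -sfn "$new_home" "$link"
}

# Example usage (paths are illustrative only):
#   use_jetty_home /opt/jetty-9.1.3.v20140225 /opt/jetty-current
#   export JETTY_HOME=/opt/jetty-current
#   cd /tmp/demo && java -jar $JETTY_HOME/start.jar
```

The base in /tmp/demo is untouched by the switch; only the location that start.jar is loaded from changes.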

You can see details of the modules available and the current configuration using the commands:

java -jar $JETTY_HOME/start.jar --list-modules
java -jar $JETTY_HOME/start.jar --list-config
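As a rough complement to these commands, the enabled modules can also be read straight from the base directory. The following is a minimal sketch, assuming each file in start.d enables its module with a `--module=<name>` line; the `enabled_modules` helper is hypothetical and not part of Jetty.

```shell
#!/bin/sh
# Hypothetical helper: list the modules enabled in a jetty base by reading
# the --module=<name> lines from its start.d/*.ini files.
# Assumption: each ini file created by --add-to-startd contains such a line.
enabled_modules() {
    base=${1:-.}
    # -h suppresses file names; sed strips the option prefix
    grep -h '^--module=' "$base"/start.d/*.ini 2>/dev/null \
        | sed 's/^--module=//' \
        | sort -u
}

# Example usage, with /tmp/demo as the base created earlier:
#   enabled_modules /tmp/demo
```

This only inspects files on disk, so unlike the start.jar commands it does not resolve module dependencies.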

You can see a full demonstration of the jetty base and module capabilities in the following webinar:

Note also that with Jetty 9.0.4, modules will be available to replace the GlassFish versions of JSP and JSTL with the Apache release.