So you are not Google! Your website takes only a few tens or maybe hundreds of requests a second, and your current server handles it without a blip. So you think you don’t need a faster server, and that speed is only something to consider when you have 10,000 or more simultaneous users! WRONG! All websites need to be concerned about speed in one form or another, and this blog explains why, and how Jetty with SPDY can help improve your business no matter how large or small you are!

Speed is Relative

What does it mean to say your website is fast? There are many different ways of measuring speed, and while some websites are concerned with all of them, most need only be concerned with some aspects of speed.

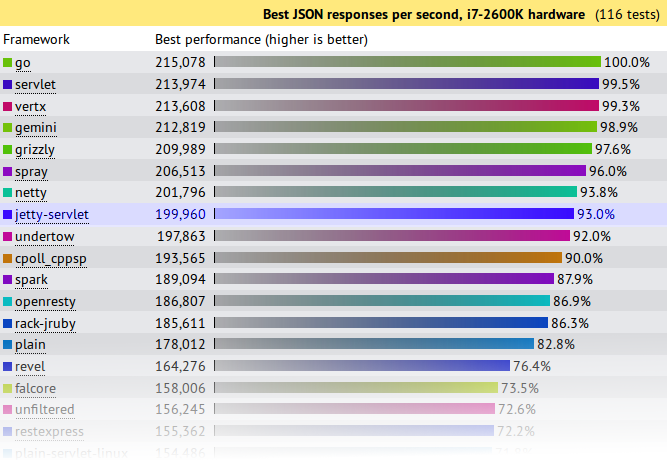

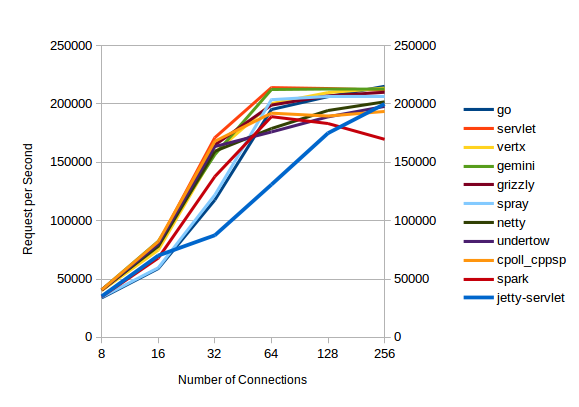

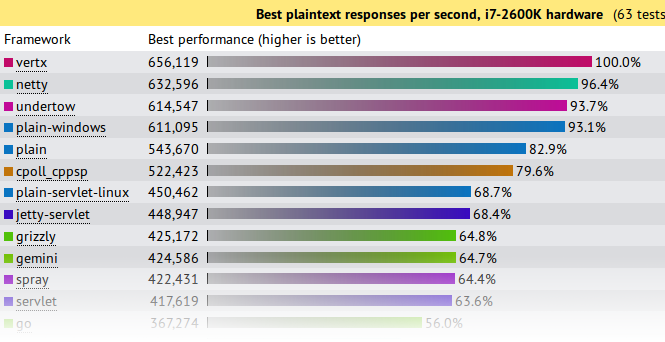

Requests per Second

The first measure of speed that many web developers think about is throughput: how many requests per second can your website handle? For large web businesses with millions of users this is indeed a very important measure, but for most websites, requests per second is just not an issue. Most servers can handle thousands of requests per second, which represents tens of thousands of simultaneous users and far exceeds the client base and/or database transaction capacity of small to medium enterprises. Thus a server and/or protocol that allows even more requests per second is just not a significant concern for most. (If it is, then Jetty is still the server for you, just not for the reasons this blog explains.)
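The step from requests per second to simultaneous users can be sketched as simple arithmetic. The figures below (2,000 requests per second, one request per user every 10 seconds) are illustrative assumptions, not measurements from any real site:

```python
def simultaneous_users(requests_per_second: float, seconds_between_requests: float) -> float:
    """Rough number of active users a given throughput can sustain,
    assuming every user issues one request per interval."""
    return requests_per_second * seconds_between_requests

# Assumed figures: 2,000 requests/s, each user clicking once every 10 s.
users = simultaneous_users(2000, 10)
print(users)
```

Under these assumptions a server handling a couple of thousand requests per second is already serving on the order of twenty thousand active users.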

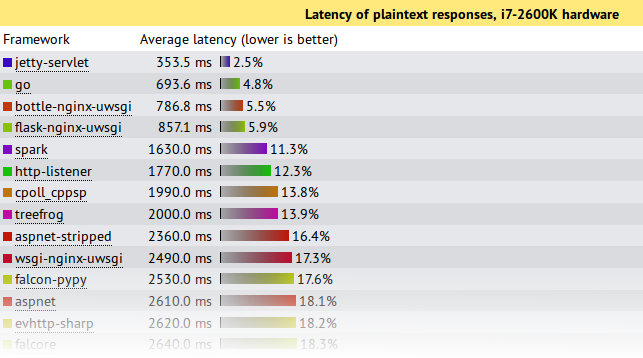

Request Latency

Another speed measure is request latency, which is the time it takes a server to parse a request and generate a response. This can range from a few milliseconds to many seconds depending on the type of request and the complexity of the application. It can be a very important measure for some websites, especially web service or REST style servers that handle a transaction per message. But because total latency is dominated by network latency (10-500 ms) and application processing (1-30,000 ms), the time the server itself spends handling a request/response (1-5 ms) is typically not an important driver when selecting a server.
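To get a feel for how small the server's share usually is, here is a minimal sketch that adds up the three components. The ranges come from the paragraph above; the mid-range values picked for the "typical" case are assumptions chosen for illustration:

```python
def server_share(network_ms: float, app_ms: float, server_ms: float) -> float:
    """Fraction of the total request latency spent inside the server itself."""
    total = network_ms + app_ms + server_ms
    return server_ms / total

# Extreme case: fastest network and application, slowest server handling.
extreme = server_share(network_ms=10, app_ms=1, server_ms=5)

# Assumed mid-range request: 100 ms network, 50 ms application, 2 ms server.
typical = server_share(network_ms=100, app_ms=50, server_ms=2)

print(f"extreme: {extreme:.1%}, typical: {typical:.1%}")
```

Only in the extreme corner, where both the network and the application are at their fastest, does the server's own handling time become a noticeable part of the total.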

Page Load Speed

The speed measure that is most apparent to users of your website is how long a page takes to load. For a typical website, this involves fetching on average 85 resources (HTML, images, CSS, JavaScript, etc.) in many HTTP requests over multiple connections. The studies summarized below show that page load time is a metric that can greatly affect the effectiveness of a website. Page load times have typically been influenced primarily by page design, and the server had little ability to speed up page loads. But with the SPDY protocol, there are now ways to greatly improve page load time, which we will see is a significant business advantage regardless of the size of your website and client base.

The Business case for Page Load Speed

The Book Of Speed presents the business benefits of reduced page load time, as determined by the many studies summarized below:

- A study at Microsoft’s live.com found that slowing page loads by 500ms reduced revenue per user by 1.2%. This increased to 2.8% at 1000ms delay and 4.3% at 2000ms, mostly because of a reduced click through rate.

- Google found that the negative effect on business of slow pages got worse the longer users were exposed to a slow site.

- Yahoo found that a slowdown of 400ms was enough to drop the completed page loads by between 5% and 9%. So users were clicking away from the page rather than waiting for it to load.

- AOL studied several of its web properties and found a strong correlation between page load time and the number of page views per user visit. Faster sites retained their visitors for more pages.

- When Mozilla improved the speed of their Internet Explorer landing page by 2.2s, they increased their rate of conversions by 15.4%.

- Shopzilla reduced their page load time from 6s to 1.2s and increased their sales conversion by 7-12%, and also reduced their operating costs due to reduced infrastructure needs.

These studies clearly show that page load speed should be a significant consideration for all web-based businesses, and they are backed up by many more, such as:

- Akamai reveals 2s as the Threshold of Acceptability.

- Gomez: Why Web Performance Matters.

- QuBit estimate £1.73bn lost in global sales each year due to slow loading speeds.

- Speedprofs reveal the ROI of speed.

If that was not enough, Google have also confirmed that they use page load speed as one of the key factors when ranking search results to display. Thus a slow page can do double damage of reducing the users that visit and reducing the conversion rate of those that do.

Hopefully you are getting the message now, that page load speed is very important and the sooner you do something about it, the better it will be. So what can you do about it?

Web Optimization

The traditional approach to improving page load speed has been Web Performance Optimization: improving the structure and technical implementation of your web pages using techniques including:

- Cache Control

- GZip components

- Component ordering

- Combine multiple CSS and JavaScript components

- Minify CSS and JavaScript

- Inline images, CSS Sprites and image maps

- Content Delivery Networks

- Reduce DOM elements in documents

- Split content over domains

- Reduce cookies

These are all great things to do and many will provide significant speed ups. However, most of these techniques are very intrusive and can be at odds with good software engineering, development speed and the separation of concerns between designers and developers. It can be a considerable disruption to a development effort to put in aggressive optimization goals alongside functionality, design and time to market concerns.

SPDY for Page Load Speed

The SPDY protocol is being developed primarily by Google to replace HTTP, with a particular focus on improving page load latency. SPDY is already deployed on over 50% of browsers and is the basis of the first draft of the HTTP/2.0 specification being developed by the IETF. Jetty was the first Java server to implement SPDY, and Jetty-9 has been re-architected specifically to better handle the multi-protocol, TLS, push and multiplexing features of SPDY.

Most importantly, because SPDY is an improvement in the network transport layer, it can greatly improve page load times without making any changes at all to a web application. It is entirely transparent to the web developers and does not intrude into the design or development!

SPDY Multiplexing

One of the biggest contributors to web page load latency is the inability of HTTP to use connections efficiently. An HTTP connection can have only 1 outstanding request, and browsers have a low limit (typically 6) on the number of connections that can be used in parallel. This means that if your page requires 85 resources to render (which is the average), it can only fetch them 6 at a time and it will take at least 15 round trips over the network before the page is rendered. With network round trip times often in the hundreds of ms, this can add seconds to page load times!
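The round trip arithmetic can be sketched as follows. This is a deliberately simplified model that assumes every resource is known up front and that each connection carries exactly one request per round trip; the 200 ms round trip time is an assumed figure:

```python
import math

def http_round_trips(resources: int, connections: int = 6) -> int:
    """Minimum number of request batches when each connection
    can have only one outstanding request at a time."""
    return math.ceil(resources / connections)

def round_trip_latency_ms(resources: int, rtt_ms: float, connections: int = 6) -> float:
    """Latency spent purely on waiting for round trips, ignoring transfer time."""
    return http_round_trips(resources, connections) * rtt_ms

trips = http_round_trips(85)                      # 85 resources, 6 connections
latency = round_trip_latency_ms(85, rtt_ms=200)   # assumed 200 ms round trip
print(trips, latency)
```

With 85 resources and 6 connections, the last batch holds a single resource, yet it still costs a full round trip.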

SPDY resolves this issue by supporting multiplexed requests over a single connection, with no limit on the number of parallel requests. Thus if a page needs 85 resources to load, SPDY allows all 85 to be requested in parallel, so only a single round trip of latency is imposed and content can be delivered at the network capacity.

Moreover, because the single connection is used and reused, the TCP/IP slow start window is rapidly expanded and the effective network capacity available to the browser is thus increased.
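The effect of slow start can be illustrated with a toy model in which the congestion window doubles every round trip. The initial window of 10 segments, the 1460 byte segment size and the "warm" window of 80 segments are assumptions for illustration, and the model ignores packet loss, receive windows and everything else a real TCP stack does:

```python
def round_trips_to_send(total_bytes: int, initial_segments: int = 10, mss: int = 1460) -> int:
    """Round trips needed to deliver total_bytes when the congestion
    window starts at initial_segments and doubles every round trip."""
    cwnd = initial_segments
    sent = 0
    trips = 0
    while sent < total_bytes:
        sent += cwnd * mss   # one full window is delivered per round trip
        cwnd *= 2            # slow start: the window doubles each round trip
        trips += 1
    return trips

cold = round_trips_to_send(100_000)                       # fresh connection
warm = round_trips_to_send(100_000, initial_segments=80)  # window already grown
print(cold, warm)
```

In this model a fresh connection needs several round trips to move 100 KB, while a connection whose window has already grown delivers it in one. Six parallel HTTP connections each pay the warm-up cost separately; a single reused connection pays it once.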

SPDY Push

Multiplexing is key to reducing round trips, but unfortunately it cannot remove them all, because the browser has to receive and parse the HTML before it knows which CSS resources to fetch, and those CSS resources have to be fetched and parsed before any image links in them are known and fetched. Thus even with multiplexing, a page might take 2 or 3 network round trips just to identify all the resources associated with a page.

But SPDY has another trick up its sleeve. It allows a server to push resources to a browser in anticipation of requests that might come. Jetty was the first server to implement this mechanism, and it uses relationships learnt from previous requests to create a map of associated resources, so that when a page is requested, all its associated resources can immediately be pushed and no additional network round trips are incurred.
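The discovery problem can be sketched by treating a page as a graph of references and counting how many rounds it takes to find everything. The resource names here are hypothetical, and the model assumes each round costs one network round trip:

```python
def discovery_rounds(references: dict, root: str) -> int:
    """Number of fetch rounds needed before every resource reachable
    from root has been fetched, fetching each newly found level in parallel."""
    rounds = 0
    seen = {root}
    frontier = [root]
    while frontier:
        rounds += 1  # fetch everything currently known, in parallel
        next_frontier = []
        for resource in frontier:
            for child in references.get(resource, []):
                if child not in seen:
                    seen.add(child)
                    next_frontier.append(child)
        frontier = next_frontier
    return rounds

# Hypothetical page: the HTML references CSS, which references an image.
page = {
    "index.html": ["style.css", "app.js", "logo.png"],
    "style.css": ["background.png", "font.woff"],
}
rounds = discovery_rounds(page, "index.html")
print(rounds)
```

Even with unlimited multiplexing, the number of rounds is set by the depth of the reference chain, not by the number of resources.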
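The idea of learning associated resources from earlier requests can be sketched as below. This is only an illustration of the concept, not Jetty's implementation: the `PushMap` class and its methods are hypothetical names, and it assumes the server can see which page referred each request (for example via the HTTP `Referer` header):

```python
from collections import defaultdict
from typing import Optional

class PushMap:
    """Hypothetical map from a page to the resources seen requested for it."""

    def __init__(self):
        self._associated = defaultdict(list)

    def observe(self, path: str, referrer: Optional[str] = None) -> None:
        """Record that path was requested on behalf of the referrer page."""
        if referrer is not None and path not in self._associated[referrer]:
            self._associated[referrer].append(path)

    def resources_to_push(self, path: str) -> list:
        """Resources previously associated with path, in first-seen order."""
        return list(self._associated.get(path, []))

push_map = PushMap()
# First visit: the server only learns the relationships.
push_map.observe("/index.html")
push_map.observe("/style.css", referrer="/index.html")
push_map.observe("/logo.png", referrer="/index.html")
# Later visits: these can be pushed as soon as /index.html is requested.
to_push = push_map.resources_to_push("/index.html")
print(to_push)
```

The first visitor to a page gets no benefit in this sketch, because the map is still empty; the gain comes on subsequent requests for the same page.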

SPDY Demo

The following demonstration was given at JavaOne 2012 and clearly shows the SPDY page load latency improvements for a simple page with 25 image blocks over a simulated 200ms network:

How do I get SPDY?

To get the business benefits of speed for your web application, you simply need to deploy it on Jetty and enable SPDY with an SSL certificate for your site. Standard Java web applications can be deployed without modification on Jetty, and there are simple solutions to run sites built with PHP, Ruby, GWT, etc. on Jetty as well.

If you want assistance setting up Jetty and SPDY, why not look at the affordable Jetty Migration Services available from Intalio.com and get the Jetty experts to help power your website?