Greg Wilkins gave the following session at JavaOne 2014 about Servlet 3.1 Async I/O.

It’s a great talk in many ways.

You get to know from an insider of the Servlet Expert Group about the design of the Servlet 3.1 Async I/O APIs.

You get to know from the person who created and developed Jetty, and who has implemented the Servlet specifications for 19 years, what the gotchas of these new APIs are.

You get to know many great insights on how to write correct asynchronous code, and believe it – it’s not as straightforward as you think!

You get to know that Jetty 9 supports Servlet 3.1 and you should start using it today 🙂

Enjoy !

Category: Asynchronous

-

JavaOne 2014 Servlet 3.1 Async I/O Session

-

Jetty @ JavaOne 2014

I’ll be attending JavaOne Sept 29 to Oct 1 and will be presenting several talks on Jetty:

- CON2236 Servlet Async IO: I’ll be looking at the servlet 3.1 asynchronous IO API and how to use it for scale and low latency. Also covers a little bit about how we are using it with http2. There is an introduction video but the talk will be a lot more detailed and hopefully interesting.

- BOF2237 Jetty Features: This will be a free-form, two-way discussion about new features in Jetty and its future direction: http2, modules, admin consoles, dockers etc. are all good topics for discussion.

- CON5100 Java in the Cloud: This is primarily a Google session, but I’ve been invited to present the work we have done improving the integration of Jetty into their cloud offerings.

I’ll be in the Bay area from the 23rd and I’d be really pleased to meet up with Jetty users in the area before or during the conference for anything from an informal chat/drink/coffee up to the full sales pitch of Intalio|Webtide services (or even both!): <gregw@intalio.com>

-

Jetty-9 Iterating Asynchronous Callbacks

While Jetty has internally used asynchronous IO since 7.0, Servlet 3.1 has added asynchronous IO to the application API and Jetty-9.1 now supports asynchronous IO in an unbroken chain from application to socket. Asynchronous APIs can often look intuitively simple, but there are many important subtleties to asynchronous programming and this blog looks at one important pattern used within Jetty. Specifically we look at how an iterating callback pattern is used to avoid deep stacks and unnecessary thread dispatches.

Asynchronous Callback

Many programmers wrongly believe that asynchronous programming is about Futures. However Futures are a mostly broken abstraction and could best be described as a deferred blocking API rather than an Asynchronous API. True asynchronous programming is about callbacks, where the asynchronous operation calls back the caller when the operation is complete. A classic example of this is the NIO AsynchronousByteChannel write method:

    <A> void write(ByteBuffer src, A attachment, CompletionHandler<Integer,? super A> handler);

    public interface CompletionHandler<V,A> {
        void completed(V result, A attachment);
        void failed(Throwable exc, A attachment);
    }

With an NIO asynchronous write, a CompletionHandler instance is passed that is called back once the write operation has completed or failed. If the write channel is congested, then no calling thread is held or blocked whilst the operation waits for the congestion to clear and the callback will be invoked by a thread typically taken from a thread pool.
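To make the callback style concrete, here is a minimal sketch using the JDK's AsynchronousFileChannel, which offers the same CompletionHandler contract as the socket channels discussed above. The class name CallbackWriteDemo and the temporary file are assumptions made purely for illustration; the latch is there only so that the demo can hand a result back to its caller, and a real asynchronous application would carry on from inside completed() rather than wait.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.AsynchronousFileChannel;
import java.nio.channels.CompletionHandler;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class: writes a byte array with a CompletionHandler callback.
final class CallbackWriteDemo {

    /** Returns the byte count reported to completed(), or -1 if the write failed or timed out. */
    static int writeWithCallback(byte[] data) {
        AtomicInteger written = new AtomicInteger(-1);
        CountDownLatch done = new CountDownLatch(1);
        try {
            Path file = Files.createTempFile("callback-demo", ".bin");
            file.toFile().deleteOnExit();
            try (AsynchronousFileChannel channel =
                     AsynchronousFileChannel.open(file, StandardOpenOption.WRITE)) {
                // The write call returns immediately; the handler is invoked later,
                // typically by a thread from the channel's thread pool.
                channel.write(ByteBuffer.wrap(data), 0L, null, new CompletionHandler<Integer, Void>() {
                    @Override
                    public void completed(Integer result, Void attachment) {
                        written.set(result);
                        done.countDown();
                    }

                    @Override
                    public void failed(Throwable exc, Void attachment) {
                        done.countDown();
                    }
                });
                // Demo only: wait so the result can be returned to the caller.
                done.await(5, TimeUnit.SECONDS);
            }
            return written.get();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }
}
```

Calling CallbackWriteDemo.writeWithCallback with a five byte array reports 5 to the handler, because a small write to a local file completes in one operation.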

The Servlet 3.1 Asynchronous IO API is syntactically very different, but semantically similar to NIO. Rather than have a callback when a write operation has completed the API has a WriteListener API that is called when a write operation can proceed without blocking:

    public interface WriteListener extends EventListener {
        public void onWritePossible() throws IOException;
        public void onError(final Throwable t);
    }

Whilst this looks different to the NIO write CompletionHandler, effectively a write is possible only when the previous write operation has completed, so the callbacks occur on essentially the same semantic event.

Callback Threading Issues

So that asynchronous callback concept looks pretty simple! How hard could it be to implement and use! Let’s consider an example of asynchronously writing the data obtained from an InputStream. The following WriteListener can achieve this:

    public class AsyncWriter implements WriteListener {
        private InputStream in;
        private ServletOutputStream out;
        private AsyncContext context;

        public AsyncWriter(AsyncContext context, InputStream in, ServletOutputStream out) {
            this.context=context;
            this.in=in;
            this.out=out;
        }

        public void onWritePossible() throws IOException {
            byte[] buf = new byte[4096];
            // keep writing for as long as the container can accept data without blocking
            while(out.isReady()) {
                int l=in.read(buf,0,buf.length);
                if (l<0) {
                    context.complete();
                    return;
                }
                out.write(buf,0,l);
            }
        }
        ...
    }

Whenever a write is possible, this listener will read some data from the input and write it asynchronously to the output. Once all the input is written, the asynchronous Servlet context is signalled that the writing is complete.

However there are several key threading issues with a WriteListener like this from both the caller and callee’s point of view. Firstly this is not entirely non-blocking, as the read from the input stream can block. However if the input stream is from the local file system and the output stream is to a remote socket, then the probability and duration of the input blocking is much less than that of the output, so this is substantially non-blocking asynchronous code and thus is reasonable to include in an application. What this means for asynchronous operation providers (like Jetty) is that you cannot trust any code you call back to not block, and thus you cannot use an important thread (eg one iterating over selected keys from a Selector) to do the callback, else an application may inadvertently block other tasks from proceeding. Thus asynchronous IO implementations must often dispatch a thread to perform a callback to application code.

Because dispatching threads is expensive in both CPU and latency, asynchronous IO implementations look for opportunities to optimise away thread dispatches to callbacks. The Servlet 3.1 API has by design such an optimisation with the out.isReady() call that allows iteration of multiple operations within the one callback. A dispatch to onWritePossible only happens when it is required to avoid a blocking write and often many write iterations can proceed within a single callback. An NIO CompletionHandler based implementation of the same task is only able to perform one write operation per callback and must wait for the invocation of the complete handler for that operation before proceeding:

    public class AsyncWriter implements CompletionHandler<Integer,Void> {
        private InputStream in;
        private AsynchronousByteChannel out;
        private CompletionHandler<Void,Void> complete;
        private byte[] buf = new byte[4096];

        public AsyncWriter(InputStream in, AsynchronousByteChannel out, CompletionHandler<Void,Void> complete) {
            this.in=in;
            this.out=out;
            this.complete=complete;
            completed(0,null);
        }

        public void completed(Integer w,Void a) throws IOException {
            int l=in.read(buf,0,buf.length);
            if (l<0)
                complete.completed(null,null);
            else
                out.write(ByteBuffer.wrap(buf,0,l),this);
        }
        ...
    }

Apart from an unrelated significant bug (left as an exercise for the reader to find), this version of the AsyncWriter has a significant threading challenge. If the write can trivially complete without blocking, should the callback to the CompletionHandler be dispatched to a new thread or should it just be called from the scope of the write using the caller thread? If a new thread is always used, then many dispatch delays will be incurred and throughput will be very low. But if the callback is invoked from the scope of the write call, then if the callback does a re-entrant call to write, it may call a callback again which calls write again, and so on, so that a very deep stack results and a stack overflow can often occur.
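The re-entrancy hazard is easy to reproduce without any real IO. The sketch below assumes a hypothetical ImmediateSink whose writes always complete trivially and call back within the scope of write(); the Callback interface and all names are invented for the illustration and are not NIO or Jetty types. Each completed write triggers the next one from inside the callback, so the stack grows by one level per chunk.

```java
// Hypothetical demo: counts how deep the stack gets when callbacks re-enter write().
final class ReentrantCallbackDemo {

    interface Callback {
        void succeeded();
    }

    /** Stand-in for a channel that is never congested: it calls back in the caller's scope. */
    static final class ImmediateSink {
        void write(Callback callback) {
            callback.succeeded();
        }
    }

    /** Writes the given number of chunks and returns the deepest callback nesting observed. */
    static int maxDepthForChunks(int chunks) {
        ImmediateSink sink = new ImmediateSink();
        int[] completed = {0};
        int[] depth = {0};
        int[] maxDepth = {0};
        Callback writer = new Callback() {
            @Override
            public void succeeded() {
                depth[0]++;
                maxDepth[0] = Math.max(maxDepth[0], depth[0]);
                // Re-entrant call: the next write starts before this callback has returned.
                if (++completed[0] < chunks) {
                    sink.write(this);
                }
                depth[0]--;
            }
        };
        sink.write(writer);
        return maxDepth[0];
    }
}
```

With 100 chunks the nesting reaches 100 levels, so a large enough transfer over an uncongested channel would eventually exhaust the stack.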

The JVM’s implementation of NIO resolves this dilemma by doing both! It performs the callback in the scope of the write call until it detects a deep stack, at which time it dispatches the callback to a new thread. While this does work, I consider it something of a worst-of-both-worlds solution: you get deep stacks and you get dispatch latency. Yet it is an accepted pattern and Jetty-8 uses this approach for callbacks via our ForkInvoker class.
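As a rough illustration of that hybrid strategy, the sketch below calls back directly until a per-thread depth limit is reached and then hands the callback to an executor. It is an assumption-laden toy, not the JDK's internal code and not Jetty's ForkInvoker: the class DepthLimitedInvoker, its counters and the single-threaded pool are all invented for the example.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo: direct invocation up to maxDepth, then a thread dispatch.
final class DepthLimitedInvoker {
    private final ThreadLocal<Integer> depth = ThreadLocal.withInitial(() -> 0);
    private final ExecutorService executor;
    private final int maxDepth;
    final AtomicInteger dispatches = new AtomicInteger();
    final AtomicInteger deepest = new AtomicInteger();

    DepthLimitedInvoker(ExecutorService executor, int maxDepth) {
        this.executor = executor;
        this.maxDepth = maxDepth;
    }

    void invoke(Runnable callback) {
        int current = depth.get();
        if (current >= maxDepth) {
            // Stack is too deep: pay the dispatch latency and restart from a shallow stack.
            dispatches.incrementAndGet();
            executor.execute(() -> invoke(callback));
            return;
        }
        depth.set(current + 1);
        deepest.accumulateAndGet(current + 1, Math::max);
        try {
            callback.run();
        } finally {
            depth.set(current);
        }
    }

    /** Chains the given number of callbacks; returns {deepest nesting, number of dispatches}. */
    static int[] run(int callbacks, int maxDepth) {
        ExecutorService pool = Executors.newSingleThreadExecutor();
        try {
            DepthLimitedInvoker invoker = new DepthLimitedInvoker(pool, maxDepth);
            AtomicInteger remaining = new AtomicInteger(callbacks);
            CountDownLatch done = new CountDownLatch(1);
            Runnable[] chain = new Runnable[1];
            chain[0] = () -> {
                if (remaining.decrementAndGet() > 0) {
                    invoker.invoke(chain[0]); // each callback triggers the next one
                } else {
                    done.countDown();
                }
            };
            invoker.invoke(chain[0]);
            done.await(10, TimeUnit.SECONDS);
            return new int[] {invoker.deepest.get(), invoker.dispatches.get()};
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }
}
```

Running 100 chained callbacks with a limit of 10 nests 10 deep and still needs 9 dispatches, which shows both costs being paid at once.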

Jetty-9 IO Callbacks

For Jetty-9, we wanted the best of all worlds. We wanted to avoid deep re-entrant stacks and to avoid dispatch delays. In a similar way to Servlet 3.1 WriteListeners, we wanted to substitute iteration for re-entrancy whenever possible. Thus Jetty does not use the NIO asynchronous IO channel APIs, but rather implements its own asynchronous IO pattern, using the NIO Selector to implement our own EndPoint abstraction and a simple Callback interface:

    public interface EndPoint extends Closeable {
        ...
        void write(Callback callback, ByteBuffer... buffers) throws WritePendingException;
        ...
    }

    public interface Callback {
        public void succeeded();
        public void failed(Throwable x);
    }

One key feature of this API is that it supports gather writes, so that there is less need for either iteration or re-entrancy when writing multiple buffers (eg headers, chunk and/or content). But other than that it is semantically the same as the NIO CompletionHandler and if used incorrectly could also suffer from deep stacks and/or dispatch latency.
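For readers unfamiliar with gather writes, the following sketch shows the idea with the JDK's own GatheringByteChannel support on FileChannel rather than Jetty's EndPoint, which is not used here. The class GatherWriteDemo, the temporary file and the sample header are assumptions for illustration only.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo: writes a header buffer and a body buffer with one gathering call.
final class GatherWriteDemo {

    private static boolean anyRemaining(ByteBuffer[] buffers) {
        for (ByteBuffer buffer : buffers) {
            if (buffer.hasRemaining()) {
                return true;
            }
        }
        return false;
    }

    /** Gathers header and body into a temp file and returns what ended up on disk. */
    static String gather(String header, String body) {
        try {
            Path file = Files.createTempFile("gather-demo", ".txt");
            file.toFile().deleteOnExit();
            ByteBuffer[] buffers = {
                ByteBuffer.wrap(header.getBytes(StandardCharsets.ISO_8859_1)),
                ByteBuffer.wrap(body.getBytes(StandardCharsets.ISO_8859_1))
            };
            try (FileChannel channel = FileChannel.open(file, StandardOpenOption.WRITE)) {
                // One call can drain several buffers; loop only because a write may be partial.
                while (anyRemaining(buffers)) {
                    channel.write(buffers);
                }
            }
            return new String(Files.readAllBytes(file), StandardCharsets.ISO_8859_1);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The caller passes the buffers in order and the channel consumes them in that order, so no copy into a single combined buffer and no per-buffer callback is needed.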

Jetty Iterating Callback

Jetty’s technique to avoid deep stacks and/or dispatch latency is to use the IteratingCallback class as the basis of callbacks for tasks that may take multiple IO operations:

    public abstract class IteratingCallback implements Callback {
        protected enum State { IDLE, SCHEDULED, ITERATING, SUCCEEDED, FAILED };

        private final AtomicReference<State> _state = new AtomicReference<>(State.IDLE);

        abstract protected void completed();
        abstract protected State process() throws Exception;

        public void iterate() {
            while(_state.compareAndSet(State.IDLE,State.ITERATING)) {
                State next = process();
                switch (next) {
                    case SUCCEEDED:
                        if (!_state.compareAndSet(State.ITERATING,State.SUCCEEDED))
                            throw new IllegalStateException("state="+_state.get());
                        completed();
                        return;
                    case SCHEDULED:
                        if (_state.compareAndSet(State.ITERATING,State.SCHEDULED))
                            return;
                        continue;
                    ...
                }
            }
        }

        public void succeeded() {
            loop: while(true) {
                switch(_state.get()) {
                    case ITERATING:
                        if (_state.compareAndSet(State.ITERATING,State.IDLE))
                            break loop;
                        continue;
                    case SCHEDULED:
                        if (_state.compareAndSet(State.SCHEDULED,State.IDLE))
                            iterate();
                        break loop;
                    ...
                }
            }
        }
    }

IteratingCallback is itself an example of another pattern used extensively in Jetty-9: it is a lock-free atomic state machine implemented with an AtomicReference to an Enum. This pattern allows very fast and efficient lock free thread safe code to be written, which is exactly what asynchronous IO needs.
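The AtomicReference-to-enum pattern can be seen in isolation in the small sketch below. The OPEN, CLOSING and CLOSED states and the class AtomicStateMachineDemo are hypothetical and not taken from Jetty; the point is only that compareAndSet lets exactly one thread win each transition without taking a lock.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.atomic.AtomicReference;

// Hypothetical demo: a lock-free state machine held in an AtomicReference to an enum.
final class AtomicStateMachineDemo {
    enum State { OPEN, CLOSING, CLOSED }

    private final AtomicReference<State> state = new AtomicReference<>(State.OPEN);

    /** Returns true only for the single caller that wins the OPEN to CLOSING transition. */
    boolean beginClose() {
        return state.compareAndSet(State.OPEN, State.CLOSING);
    }

    /** Completes the close; fails if the machine is not currently CLOSING. */
    boolean finishClose() {
        return state.compareAndSet(State.CLOSING, State.CLOSED);
    }

    /** Races the given number of threads on beginClose() and returns how many of them won. */
    static int racingClosers(int threads) {
        AtomicStateMachineDemo machine = new AtomicStateMachineDemo();
        AtomicInteger winners = new AtomicInteger();
        CountDownLatch start = new CountDownLatch(1);
        Thread[] racers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            racers[i] = new Thread(() -> {
                try {
                    start.await(); // release all racers at the same moment
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return;
                }
                if (machine.beginClose()) {
                    winners.incrementAndGet();
                }
            });
            racers[i].start();
        }
        start.countDown();
        for (Thread racer : racers) {
            try {
                racer.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
        }
        return winners.get();
    }
}
```

However many threads race, only one observes the successful transition, and the losers learn this from the return value rather than by blocking.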

The IteratingCallback class iterates on calling the abstract process() method until such time as it returns the SUCCEEDED state to indicate that all operations are complete. If the process() method is not complete, it may return SCHEDULED to indicate that it has invoked an asynchronous operation (such as EndPoint.write(...)) and passed the IteratingCallback as the callback.

Once scheduled, there are two possible outcomes for a successful operation. In the case that the operation completed trivially, it will have called back succeeded() within the scope of the write, thus the state will have been switched from ITERATING to IDLE so that the while loop in iterate will fail to set the SCHEDULED state and continue to switch from IDLE to ITERATING, thus calling process() again iteratively.

In the case that the scheduled operation does not complete within the scope of process, then the iterate while loop will succeed in setting the SCHEDULED state and break the loop. When the IO infrastructure subsequently dispatches a thread to callback succeeded(), it will switch from SCHEDULED to IDLE state and itself call the iterate() method to continue to iterate on calling process().

Iterating Callback Example

A simplified example of using an IteratingCallback to implement the AsyncWriter example from above is given below:

    private class AsyncWriter extends IteratingCallback {
        private final Callback _callback;
        private final InputStream _in;
        private final EndPoint _endp;
        private final ByteBuffer _buffer;

        public AsyncWriter(InputStream in,EndPoint endp,Callback callback) {
            _callback=callback;
            _in=in;
            _endp=endp;
            _buffer = BufferUtil.allocate(4096);
        }

        protected State process() throws Exception {
            int l=_in.read(_buffer.array(), _buffer.arrayOffset(), _buffer.capacity());
            if (l<0) {
                _callback.succeeded();
                return State.SUCCEEDED;
            }
            // expose exactly the l bytes just read to the write
            _buffer.position(0);
            _buffer.limit(l);
            _endp.write(this,_buffer);
            return State.SCHEDULED;
        }
    }

Several production quality examples of IteratingCallbacks can be seen in the Jetty HttpOutput class, including a real example of asynchronously writing data from an input stream.
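To watch the iteration replace re-entrancy without Jetty on the classpath, the sketch below re-creates a cut-down version of the state machine. MiniIteratingCallback and the surrounding IteratingDemo class are assumptions written for this illustration: failure handling is omitted and a direct call to succeeded() stands in for a write that completes trivially within the scope of process().

```java
import java.util.concurrent.atomic.AtomicReference;

// Hypothetical demo: a stripped-down iterating callback driven by immediate completions.
final class IteratingDemo {
    enum State { IDLE, SCHEDULED, ITERATING, SUCCEEDED }

    abstract static class MiniIteratingCallback {
        private final AtomicReference<State> state = new AtomicReference<>(State.IDLE);

        protected abstract State process();

        protected abstract void completed();

        void iterate() {
            while (state.compareAndSet(State.IDLE, State.ITERATING)) {
                State next = process();
                if (next == State.SUCCEEDED) {
                    if (!state.compareAndSet(State.ITERATING, State.SUCCEEDED)) {
                        throw new IllegalStateException("state=" + state.get());
                    }
                    completed();
                    return;
                }
                // Still ITERATING means the operation is pending: park until succeeded() resumes us.
                if (state.compareAndSet(State.ITERATING, State.SCHEDULED)) {
                    return;
                }
                // Otherwise succeeded() already reset us to IDLE, so the while loop iterates again.
            }
        }

        public void succeeded() {
            while (true) {
                State current = state.get();
                if (current == State.ITERATING) {
                    if (state.compareAndSet(State.ITERATING, State.IDLE)) {
                        return; // completed in the scope of process(): iterate() will loop
                    }
                } else if (current == State.SCHEDULED) {
                    if (state.compareAndSet(State.SCHEDULED, State.IDLE)) {
                        iterate(); // completed later on another thread: resume iterating
                        return;
                    }
                } else {
                    throw new IllegalStateException("state=" + current);
                }
            }
        }
    }

    /** Processes the given number of chunks; returns {chunks processed, deepest process() nesting}. */
    static int[] run(int chunks) {
        int[] processed = {0};
        int[] depth = {0};
        int[] maxDepth = {0};
        MiniIteratingCallback callback = new MiniIteratingCallback() {
            @Override
            protected State process() {
                if (processed[0] == chunks) {
                    return State.SUCCEEDED;
                }
                depth[0]++;
                maxDepth[0] = Math.max(maxDepth[0], depth[0]);
                processed[0]++;
                succeeded(); // stands in for a write that completes immediately
                depth[0]--;
                return State.SCHEDULED;
            }

            @Override
            protected void completed() {
            }
        };
        callback.iterate();
        return new int[] {processed[0], maxDepth[0]};
    }
}
```

Even with 100,000 immediately completing chunks the process() nesting never exceeds 1 and no thread is dispatched, in contrast to the re-entrant style where the depth grows with the number of chunks.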

Conclusion

Jetty-9 has had a lot of effort put into using efficient lock free patterns to implement a high performance scalable IO layer that can be seamlessly extended all the way into the servlet application via the Servlet 3.1 asynchronous IO. Iterating callback and lock free state machines are just some of the advanced techniques Jetty is using to achieve excellent scalability results.

-

Jetty 9.1 in Techempower benchmarks

Jetty 9.1.0 has entered round 8 of the Techempower’s Web Framework Benchmarks. These benchmarks are a comparison of over 80 framework & server stacks in a variety of load tests. I’m the first one to complain about unrealistic benchmarks when Jetty does not do well, so before crowing about our good results I should firstly say that these benchmarks are primarily focused at frameworks and are unrealistic benchmarks for server performance as they suffer from many of the failings that I have highlighted previously (see Truth in Benchmarking and Lies, Damned Lies and Benchmarks).

But I don’t want to bury the lead any more than I have already done, so I’ll firstly tell you how Jetty did before going into detail about what we did and what’s wrong with the benchmarks.

What did Jetty do?

Jetty has initially entered the JSON and Plaintext benchmarks:

- Both tests have simple requests and trivial responses with just the string “Hello World” encoded either as JSON or plain text.

- The JSON test has a maximum concurrency of 256 connections with zero delay turn around between a response and the next request.

- The plaintext test has a maximum concurrency of 16,384 and uses pipelining to run these connections at what can only be described as a pathological work load!

How did Jetty go?

At first glance at the results, Jetty looks to have done reasonably well, but on deeper analysis I think we did awesomely well and an argument can be made that Jetty is the only server tested that has demonstrated truly scalable results.

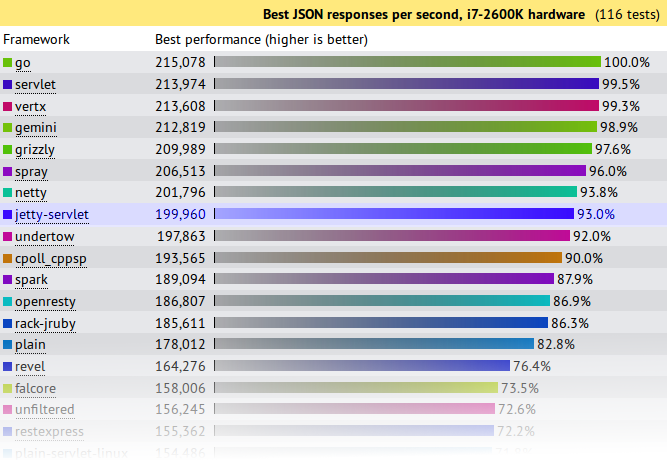

JSON Results

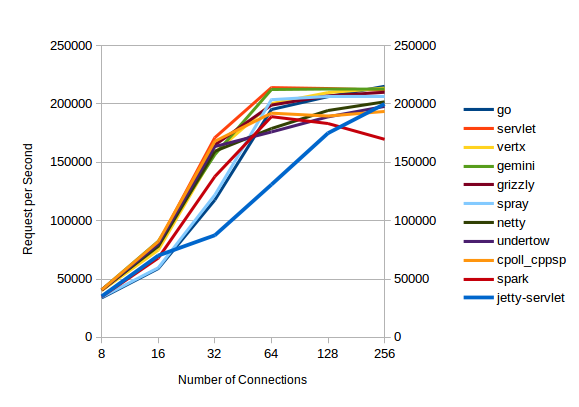

Jetty came 8th from 107 and achieved 93% (199,960 req/s) of the first place throughput. A good result for Jetty, but not great. . . . until you plot out the results vs concurrency:

All the servers with high throughputs have essentially maxed out at between 32 and 64 connections and the top servers are actually decreasing their throughput as concurrency scales from 128 to 256 connections.

Of the top throughput servers, it is only Jetty that displays near linear throughput growth vs concurrency and if this test had been extended to 512 connections (or beyond) I think you would see Jetty coming out easily on top. Jetty is investing a little more per connection, so that it can handle a lot more connections.

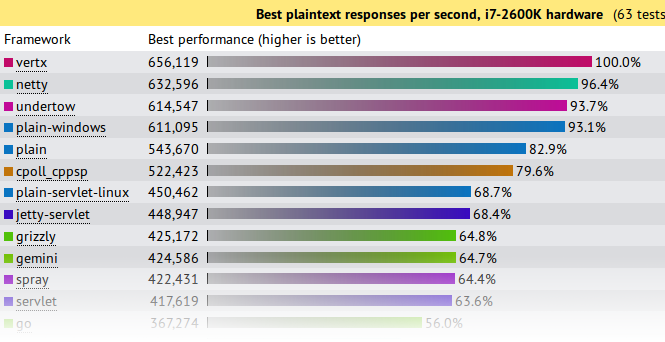

Plaintext Results

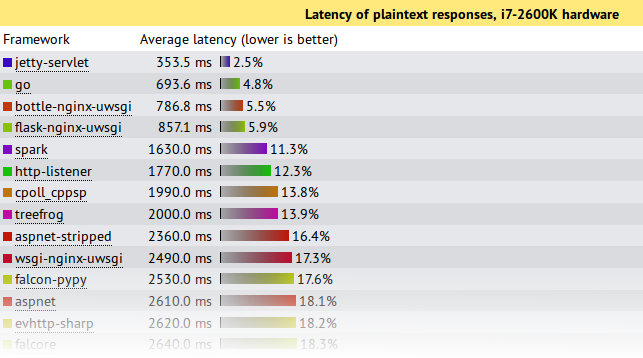

First glance again is not so great and we look like we are best of the rest with only 68.4% of the seemingly awesome 600,000+ requests per second achieved by the top 4. But throughput is not the only important metric in a benchmark and things look entirely different if you look at the latency results:

This shows that under this pathological load test, Jetty is the only server to send responses with an acceptable latency during the onslaught. Jetty’s 353.5ms is a workable latency to receive a response, while the next best of 693ms is starting to get long enough for users to register frustration. All the top throughput servers have average latencies of 7s or more, which is give-up-and-go-make-a-pot-of-coffee time for most users, especially as your average web page needs more than 10 requests to display!

Note also that these test runs were only over 15s, so servers with 7s average latency were effectively not serving any requests until the onslaught was over and then just sent all the responses in one great big batch. Jetty is the only server to actually make a reasonable attempt at sending responses during the period that a pathological request load was being received.

If your real world load is anything vaguely like this test, then Jetty is the only server represented in the test that can handle it!

What did Jetty do?

The Jetty entry into these benchmarks has done nothing special. It is an out-of-the-box configuration with trivial implementations based on the standard servlet API. More efficient internal Jetty APIs have not been used and there has been no fine tuning of the configuration for these tests. The full source is available, but is presented in summary below:

    public class JsonServlet extends GenericServlet {
        private JSON json = new JSON();

        public void service(ServletRequest req, ServletResponse res) throws ServletException, IOException {
            HttpServletResponse response= (HttpServletResponse)res;
            response.setContentType("application/json");
            Map<String,String> map = Collections.singletonMap("message","Hello, World!");
            json.append(response.getWriter(),map);
        }
    }

The JsonServlet uses the Jetty JSON mapper to convert the trivially instantiated map required by the tests. Many of the other frameworks tested use Jackson, which is now marginally faster than Jetty’s JSON, but we wanted to have our first round with entirely Jetty code.

    public class PlaintextServlet extends GenericServlet {
        byte[] helloWorld = "Hello, World!".getBytes(StandardCharsets.ISO_8859_1);

        public void service(ServletRequest req, ServletResponse res) throws ServletException, IOException {
            HttpServletResponse response= (HttpServletResponse)res;
            response.setContentType(MimeTypes.Type.TEXT_PLAIN.asString());
            response.getOutputStream().write(helloWorld);
        }
    }

The PlaintextServlet makes a concession to performance by pre-converting the string to a byte array, which is then simply written to the output stream for each response.

    public final class HelloWebServer {
        public static void main(String[] args) throws Exception {
            Server server = new Server(8080);
            ServerConnector connector = server.getBean(ServerConnector.class);
            HttpConfiguration config = connector.getBean(HttpConnectionFactory.class).getHttpConfiguration();
            config.setSendDateHeader(true);
            config.setSendServerVersion(true);

            ServletContextHandler context = new ServletContextHandler(ServletContextHandler.NO_SECURITY|ServletContextHandler.NO_SESSIONS);
            context.setContextPath("/");
            server.setHandler(context);

            context.addServlet(org.eclipse.jetty.servlet.DefaultServlet.class,"/");
            context.addServlet(JsonServlet.class,"/json");
            context.addServlet(PlaintextServlet.class,"/plaintext");

            server.start();
            server.join();
        }
    }

The servlets are run by an embedded server. The only configuration done to the server is to enable the headers required by the test and all other settings are the out-of-the-box defaults.

What’s wrong with the Techempower Benchmarks?

While Jetty has been kick-arse in these benchmarks, let’s not get carried away with ourselves because the tests are far from perfect, especially these two tests, which are not testing framework performance (the primary goal of the Techempower benchmarks):

- Both have simple requests that have no information in them that needs to be parsed other than a simple URL. Realistic web loads often have session and security cookies as well as request parameters that need to be decoded.

- Both have trivial responses that are just the string “Hello World” with minimal encoding. Realistic web load would have larger more complex responses.

- The JSON test has a maximum concurrency of 256 connections with zero delay turn around between a response and the next request. Realistic scalable web frameworks must deal with many more mostly idle connections.

- The plaintext test has a maximum concurrency of 16,384 (which is a more realistic challenge), but uses pipelining to run these connections at what can only be described as a pathological work load! Pipelining is rarely used in real deployments.

- The tests appear to run only for 15s. This is insufficient time to reach steady state and it is no good your framework performing well for 15s if it is immediately hit with a 10s garbage collection starting on the 16th second.

But let me get off my benchmarking hobby-horse, as I’ve said it all before: Truth in Benchmarking, Lies, Damned Lies and Benchmarks.

What’s good about the Techempower Benchmarks?

- There are many frameworks and servers in the comparison and whatever the flaws are, they are the same for all.

- The tests appear to be well run on suitable hardware within a controlled, open and repeatable process.

- Their primary goal is to test core mechanisms of web frameworks, such as object persistence. However, Jetty does not provide direct support for such mechanisms so we have initially not entered all the benchmarks.

Conclusion

Both the JSON and plaintext tests are busy connection tests and the JSON test has only a few connections. Jetty has always prioritized performance for the more realistic scenario of many mostly idle connections and this has shown that even under pathological loads, jetty is able to fairly and efficiently share resources between all connections.

Thus it is an impressive result that even when tested far outside of its comfort zone, Jetty-9.1.0 has performed at the top end of this league table and, if you look beyond the headline throughput figures, has presented the best scalability results. While the tested loads are far from realistic, the results do indicate that Jetty has very good concurrency and low contention.

Finally remember that this is a .0 release aimed at delivering the new features of Servlet 3.1 and we’ve hardly even started optimizing jetty 9.1.x