The HTTP2 protocol has been submitted to the next stage of becoming an internet standard, the last call to the IESG. Some feedback has been highly critical, and has sparked its own lengthy feedback. I previously gave my own critical feedback at the WG last call, but since then the protocol has improved a little and my views have moderated a touch. I still have significant reservations, as expressed in my latest feedback, which is reproduced below. Nevertheless, the Jetty implementation of this protocol is well advanced in our master branch and will soon be released with Jetty 9.3.0.

IESG Last Call Feedback

The HTTP/2 proposal describes a protocol that has some significant benefits over HTTP/1 and, considering its current deployment status, should progress to an RFC more or less as is. However, the IESG should know that it is a technical compromise that fails to meet some significant aspects of its charter.

I and others have discussed at length in the WG what we see as technical problems. These issues were given fair and repeated consideration by the WG (albeit somewhat hurried, on a schedule whose rationale was never really apparent). The resulting document does represent a very rough consensus of the active participants, which is to the credit of the chair who had to deal with a deeply divided WG. But the IESG should note that this also means that there are many parts of the protocol that are borderline “can’t live with” for many participants. It is not an exemplar of technical excellence.

I believe that this is the result of a charter that sets up opposing goals with little guidance on how to balance them. Thus the core of my feedback to this LC is not to reiterate past technical discussions, but rather to draw the IESG’s attention to the parts of the charter that are not well met.

The httpbis charter for http2 begins by defining why HTTP/1 should be replaced (my emphasis):

There is emerging implementation experience and interest in a protocol that retains the semantics of HTTP without the legacy of HTTP/1.x message framing and syntax, which have been identified as hampering performance and encouraging misuse of the underlying transport.

Some examples of the protocol misuse include:

- breaking the 2 connection limit by clients in order to reduce request latency and to maximise throughput via the utilisation of multiple flow control windows.

- use of long polling patterns to establish two way communication between client and server.

- use of the upgrade mechanism to replace the HTTP semantic with the websocket semantic, which has been described by some as a misappropriation of ports 80/443 for an alternative semantic.

I believe that the emphasis on performance (specifically browser “end-user perceived latency”, which is called out by the charter) has prevented the draft from significantly addressing the misuse goal of the charter. This emphasis on efficiency over other design aspects was well characterised by the editor’s comment:

“What we’ve actually done here is conflate some of the stream control functions with the application semantics functions in the interests of efficiency” – Martin Thomson 8/May/2014

This conflation of HTTP efficiency concerns into the multiplex framing layer has caused the draft to fail to meet its charter in several ways:

Headers are not multiplexed nor flow controlled.

Because the WG was chartered to “Retain the semantics of HTTP/1.1,” it was the rough consensus that http/2 must support arbitrarily large headers. However, in the interests of supposed efficiency, HTTP header semantics have been conflated with the framing layer and are treated specially so that they are not flow controlled and they cannot be interleaved by other multiplexed streams.

Unconstrained header size can thus result in head of line blocking (if, for example, a large header hits TCP/IP flow control), which is a concern that was explicitly called out by the charter. Even without TCP/IP flow control, small headers sent slowly can hold other messages back from initiating, progressing and/or completing.
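The blocking effect can be illustrated with a small model of a multiplexing writer. This is only a sketch under simplifying assumptions: frames are represented by their type names alone, streams are served in a plain round-robin, and the function names `interleave` and `first_frame_index` are hypothetical rather than taken from any implementation. The one protocol rule it models is that a header block (a HEADERS frame followed by its CONTINUATION frames) must be written contiguously, with no frames from other streams in between.

```python
from collections import deque


def interleave(streams):
    """Return the order in which frames reach the wire.

    `streams` maps a stream id to its list of frame type names
    ("HEADERS", "CONTINUATION" or "DATA"). Streams take turns
    round-robin, one frame per turn, except that a header block
    may not be interrupted by any other stream.
    """
    queues = {sid: deque(frames) for sid, frames in streams.items()}
    turn = deque(sid for sid, queue in queues.items() if queue)
    wire = []
    while turn:
        sid = turn.popleft()
        queue = queues[sid]
        wire.append((sid, queue.popleft()))
        # Header blocks are not multiplexed: drain every CONTINUATION
        # frame before any other stream is allowed to write.
        while queue and queue[0] == "CONTINUATION":
            wire.append((sid, queue.popleft()))
        if queue:
            turn.append(sid)
    return wire


def first_frame_index(wire, sid):
    """Position on the wire of the first frame belonging to `sid`."""
    return next(i for i, (stream, _) in enumerate(wire) if stream == sid)
```

In this model, if stream 1 sends a header block of five frames, stream 3 cannot write its first frame until position 5 on the wire. If stream 1 instead carries the same amount of data in five DATA frames, stream 3 gets its first frame out at position 1.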

While large headers are infrequently used today, the lack of flow control and interleaving of headers represents a significant incentive for large data to be moved from the body to the header. History has shown that in the pursuit of performance, protocols will be perverted! So not only will this likely increase the occurrence of head of line blocking, it is an encouragement to misuse the protocol, which breaks one of the two primary goals of the charter.
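The incentive can be seen in a toy model of a sender. This is a deliberately simplified sketch, not how any real implementation is structured: the class `FlowControlledSender` and its methods are hypothetical, frames are sent whole or not at all, and only a single connection-level window is modelled. The point it illustrates is that DATA frames consume the flow control window while header frames do not.

```python
class FlowControlledSender:
    """Toy sender in which only DATA frames are flow controlled."""

    def __init__(self, window):
        self.window = window  # bytes the peer is currently willing to accept
        self.sent = []        # (frame_type, size) pairs actually written

    def send(self, frame_type, payload):
        """Try to write a frame; return False if flow control blocks it."""
        size = len(payload)
        if frame_type == "DATA":
            if size > self.window:
                # Blocked until the peer sends a WINDOW_UPDATE.
                return False
            self.window -= size
        # HEADERS and CONTINUATION frames bypass the window entirely.
        self.sent.append((frame_type, size))
        return True

    def window_update(self, increment):
        """The peer has granted more flow control credit."""
        self.window += increment
```

With the window exhausted, a further DATA frame is refused, yet a header frame of any size is still written. An application that wants its bytes to jump the queue therefore only has to put them in a header.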

Websocket semantics are not supported.

While the WG was chartered to “coordinate this item with: … * The HYBI Working Group, regarding the possible future extension of HTTP/2.0 to carry WebSockets semantics”, this has not been done to any workable resolution. The conflation of the framing layer with HTTP means that it cannot operate independently of HTTP semantics and there is now uncertainty as to how websocket semantics can be carried over HTTP2.

An initial websocket proposal was based on using the existing DATA frames, but segmentation features needed by websocket were removed as they were not needed to support HTTP semantics.

Another websocket proposal is to define new frame types that can carry the websocket semantic. However, this suffers from the issue that intermediaries may not understand these new frame types, so the websocket semantic will not be able to penetrate the real world web. Upgrading intermediaries is a slow and difficult process that will never complete.

Yet another approach has been proposed and has been mentioned in this thread as a feature. It is to replace long polling with pushed streams. An HTTP2 request/response stream is kept open without any data transfer so that PUSH_PROMISE frames can be used to initiate new streams to carry websocket style messages from server to client in pretend HTTP request/responses. This proposal has the benefit that by pretending to be HTTP, the websocket style semantic can penetrate a web that does not know about the websocket semantic. However, this is also the kind of protocol abuse that the WG was chartered to avoid.

Instead of simply catering for the websocket semantic, the solution has been to come up with an even more tricky and convoluted abuse of the HTTP semantic for two way messaging.

Priority is a client side consideration only

The entire frame priority mechanism is focused not only on HTTP semantics, but on client side priorities. Consideration has only been given to what resources a client wishes to receive first, and little if any consideration has been given to the server’s concerns for maximising throughput and fairness for all clients. The priority mechanism does not have widely working code and is essentially a thought bubble waiting to see if it will pop or float.

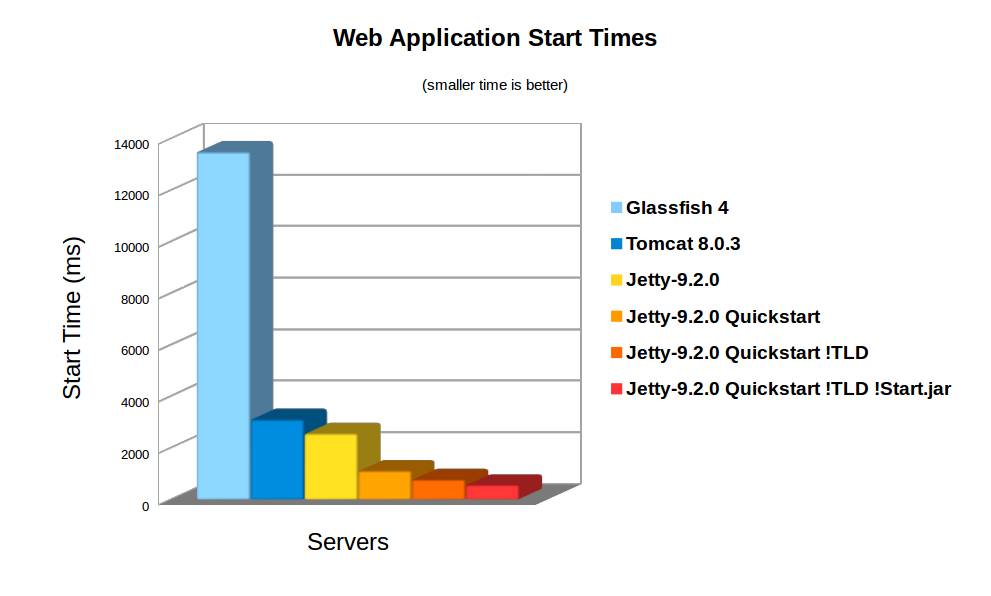

My own server (Jetty) currently plans to entirely ignore the priority mechanism because it is expressed in a style and at a point that is entirely too late for the server to retrieve any substantial resources already committed to a low client priority resource. For example, if the server has launched an SQL query and is currently converting the data from a cursor into HTML, it serves no purpose to subsequently tell it that the client thinks it is a low priority data stream and would prefer other resources first. The server has committed a thread, buffers, and scarce DB connections to the request, and its priority is to complete the response and recover those resources.

In summary

The draft as presented does represent a consensus of the WG, but also a poor technical compromise between conflicting goals set by the charter. While the performance goal appears to have been well met (at least for client side web traffic), the protocol neither removes the incentives for protocol misuse nor avoids the need for it, which may ultimately end up compromising the performance goals. I would suggest that this is a result of insufficient clarity in the charter rather than a poorly executed WG process.

Further, I believe that by creating a proposal that is so specific to the HTTP semantic we are missing an opportunity to create a multi semantic web, where not all traffic needs to pretend to be HTTP and where new semantics could be introduced without needing to redeploy or to trick the web intermediaries and/or data centre infrastructure.

Unfortunately we are well along the path of deploying http2: many if not most browsers have support for it; server implementations are available and more are on their way; several significant websites are already running the protocol and reporting benefits; intermediaries can mostly be bypassed by the forced use of TLS (many say the misuse of TLS) and the experts are exhausted from continual trench warfare on core technical issues.

I don’t think that http2 is a genie that can easily be put back in the bottle, nor can it be polished much more than it is without a change of charter. Thus on balance I think it should probably be made an RFC. Perhaps not on the standards track, but either way, we should do so knowing that it has benefits, compromises, failures and missed opportunities. We need to work out how to do better next time.